Learning from Bats: A Different Way to Navigate

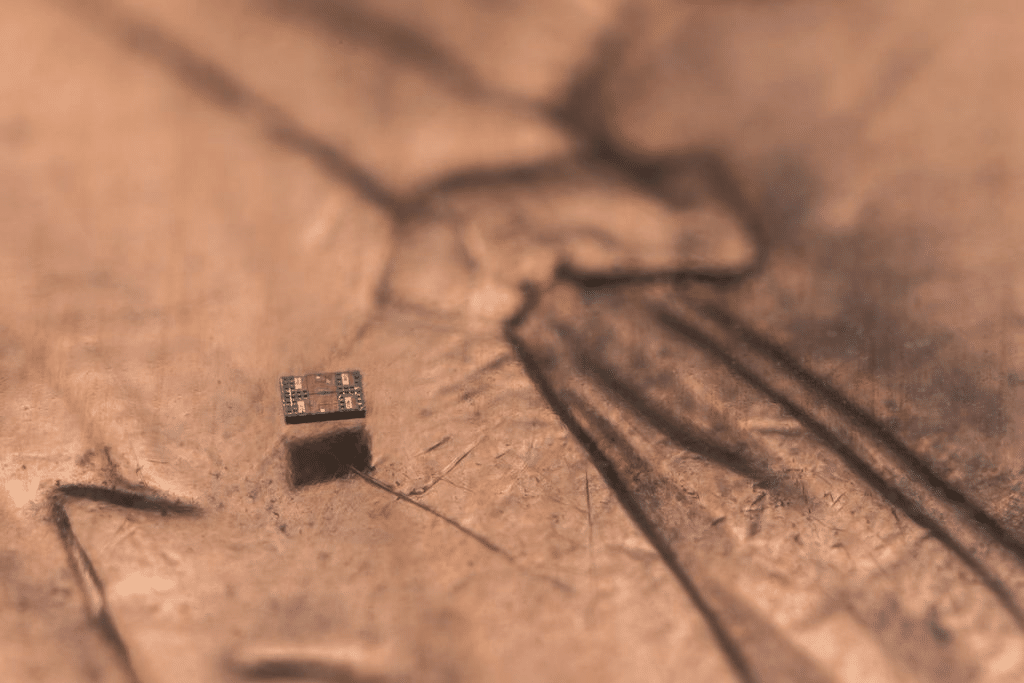

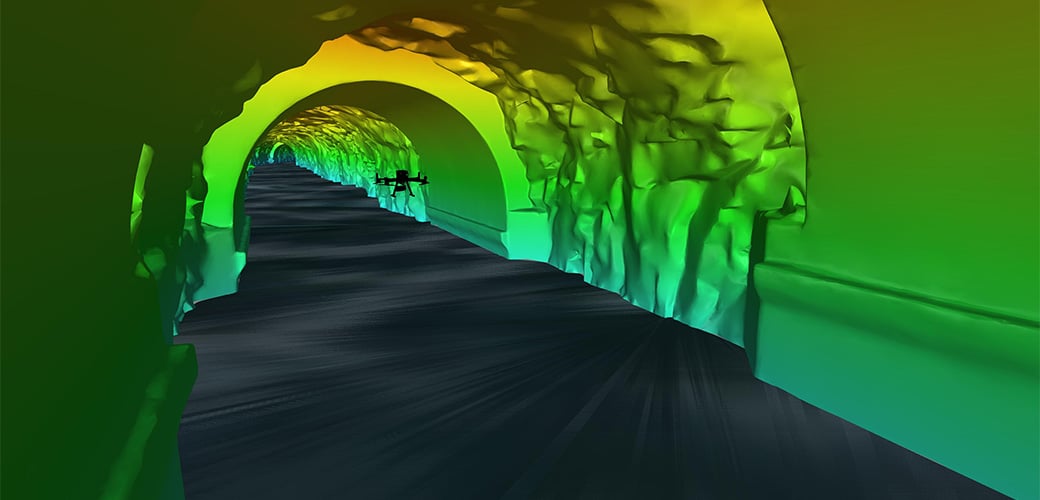

Bats navigate complex environments using echolocation. They emit sound waves and interpret the returning echoes to understand their surroundings. Researchers at Worcester Polytechnic Institute (WPI) applied this concept to drones using:

- Ultrasound sensors to emit and detect sound waves

- Acoustic shielding to reduce interference from propeller noise

- Deep learning models to interpret weak echo signals

Why Traditional Drone Sensors Fall Short

Most autonomous drones today depend on:

- Cameras and computer vision

- Radar systems

- LiDAR

These sensors are effective, but they come with tradeoffs:

- Higher cost and weight

- Greater computational demand

- Increased power consumption

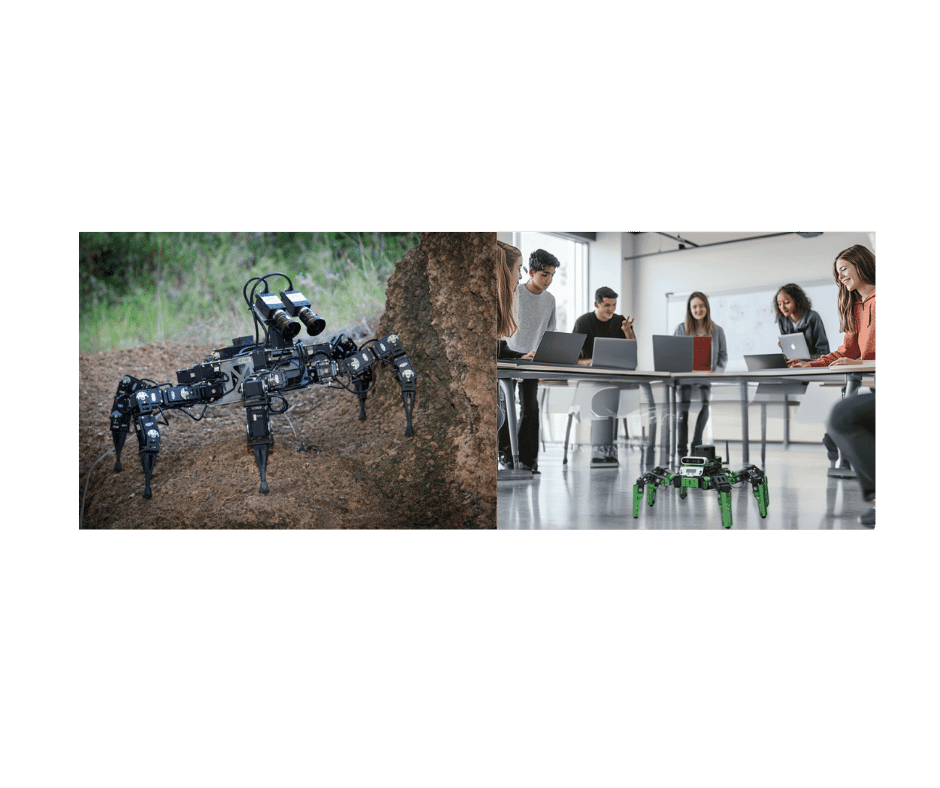

Testing in Real-World Conditions

The WPI team tested their drone in both indoor and outdoor environments designed to simulate real challenges. The drone successfully navigated:

- Dark indoor spaces with low visibility

- Outdoor wooded areas with uneven terrain

- Fog- and snow-filled obstacle courses

What This Means for Search and Rescue

In emergency scenarios, visibility is often compromised. Smoke from fires, dust from collapsed structures, or low-light conditions can prevent drones from operating effectively. Ultrasound-based navigation introduces a few key advantages:

- Operation in zero-visibility environments

- Reduced system weight, allowing smaller drones

- Lower power usage, extending flight time

A Shift Toward Lightweight, Efficient Robotics

This research reflects a broader trend in robotics: designing systems that do more with less. Instead of adding more sensors and processing power, engineers are:

- Taking inspiration from nature

- Using AI to interpret minimal data efficiently

- Simplifying hardware requirements

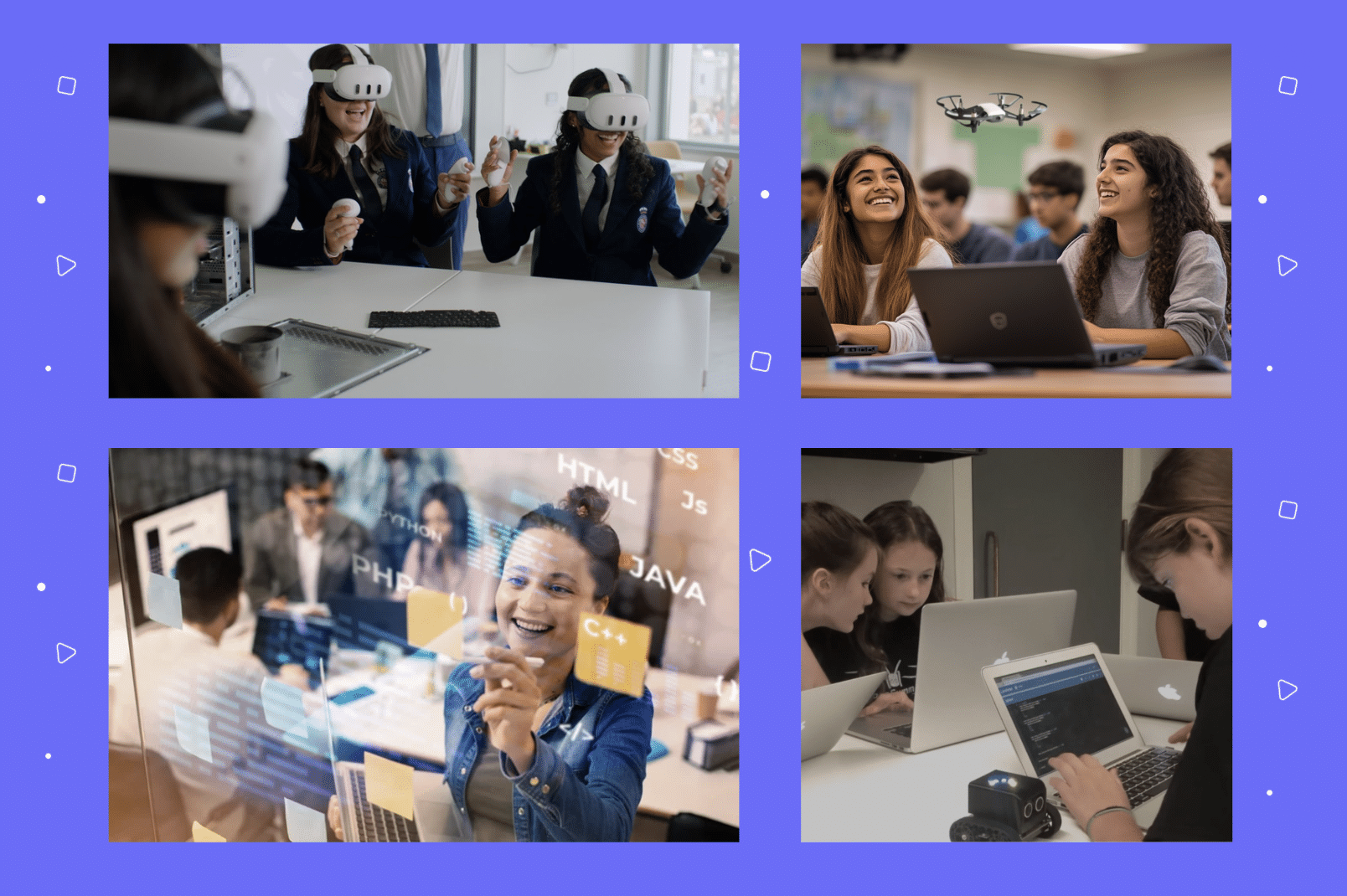

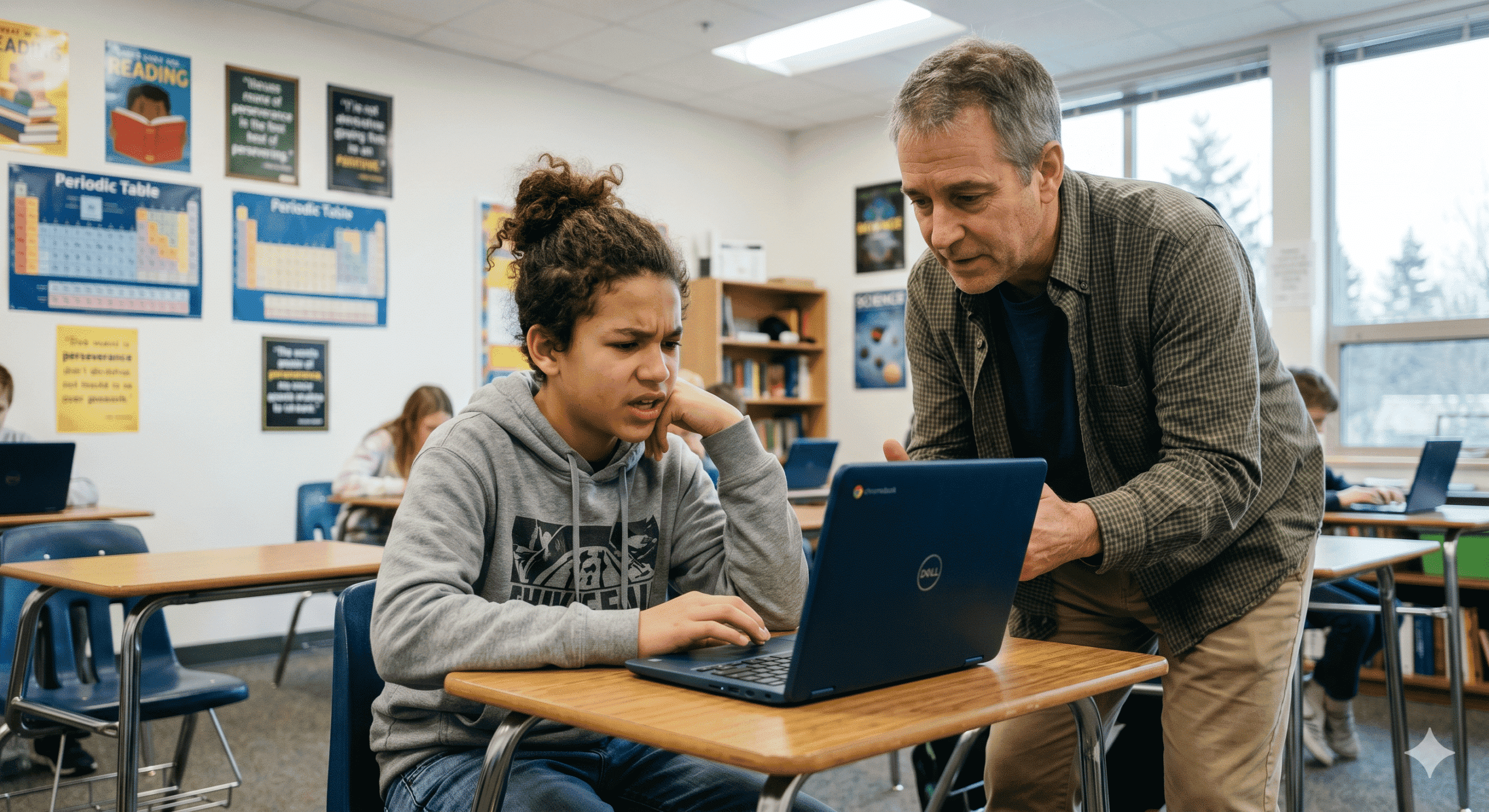

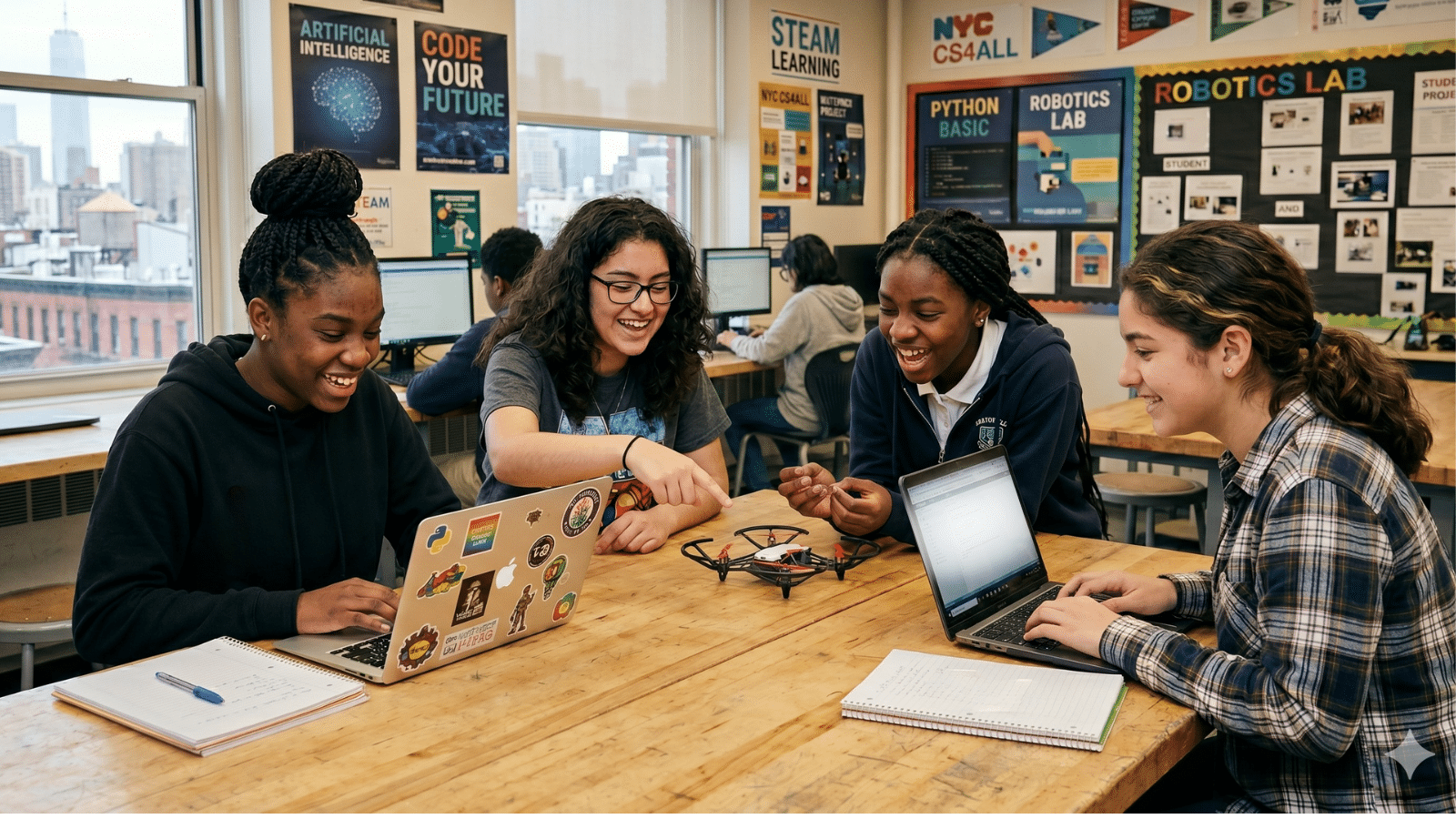

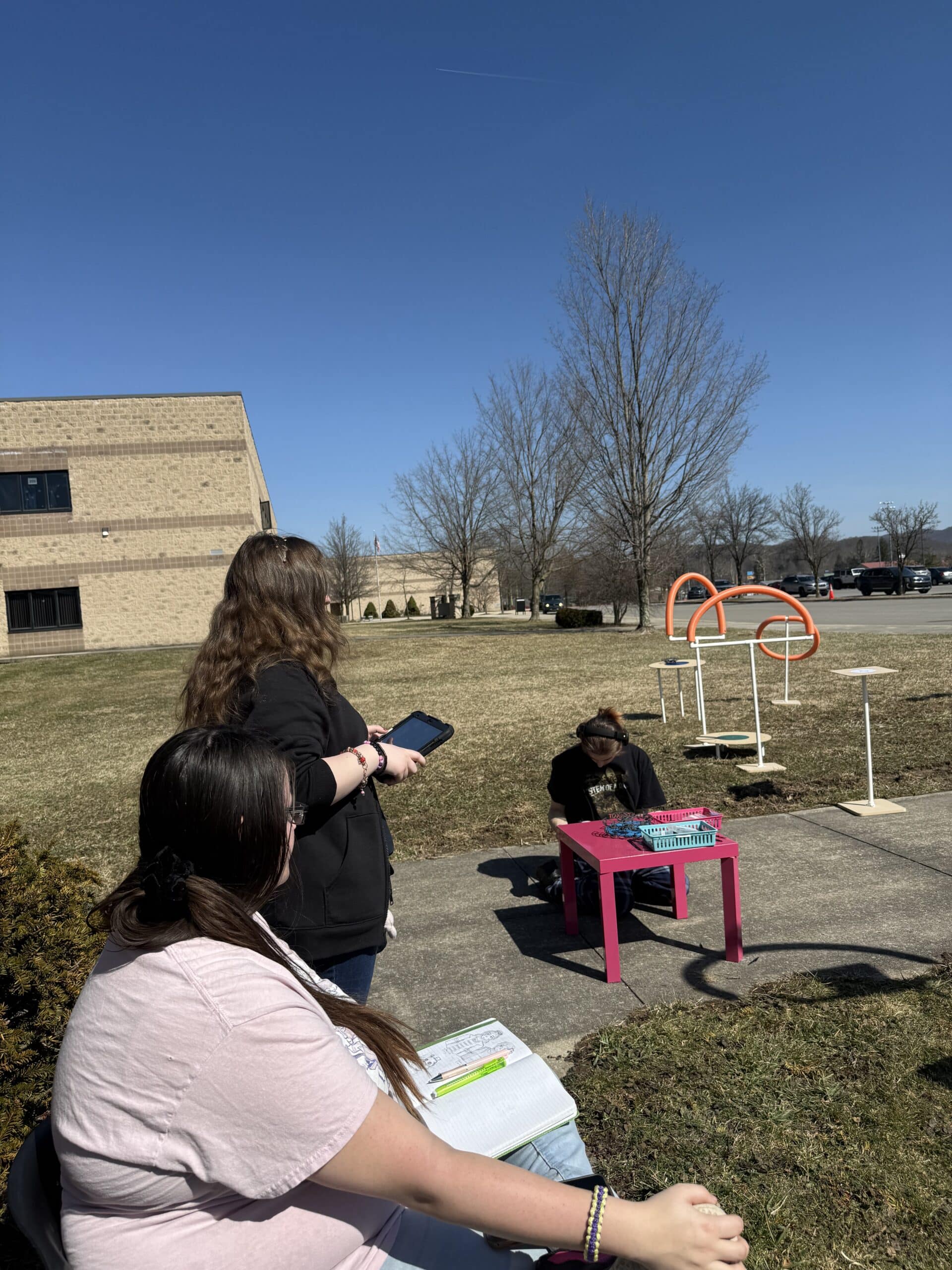

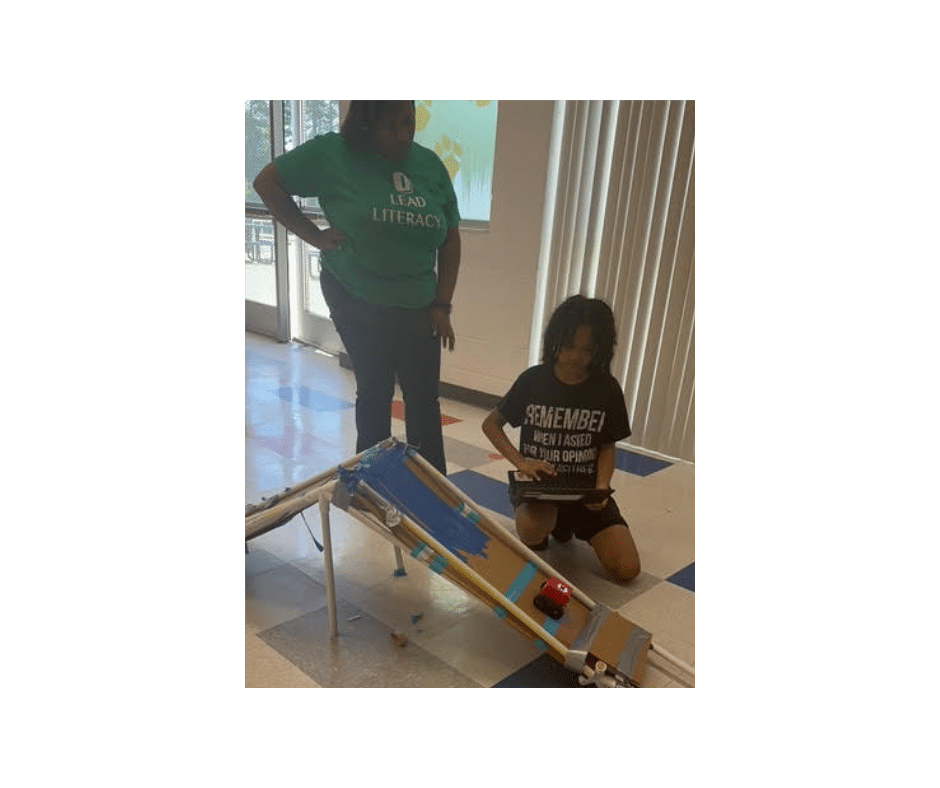

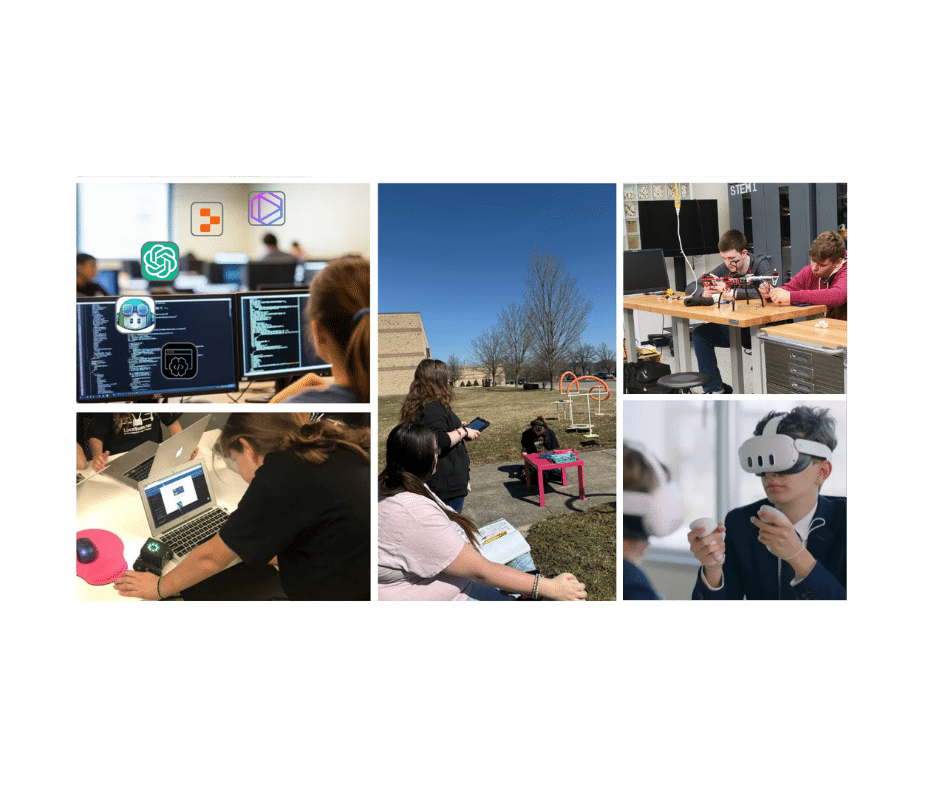

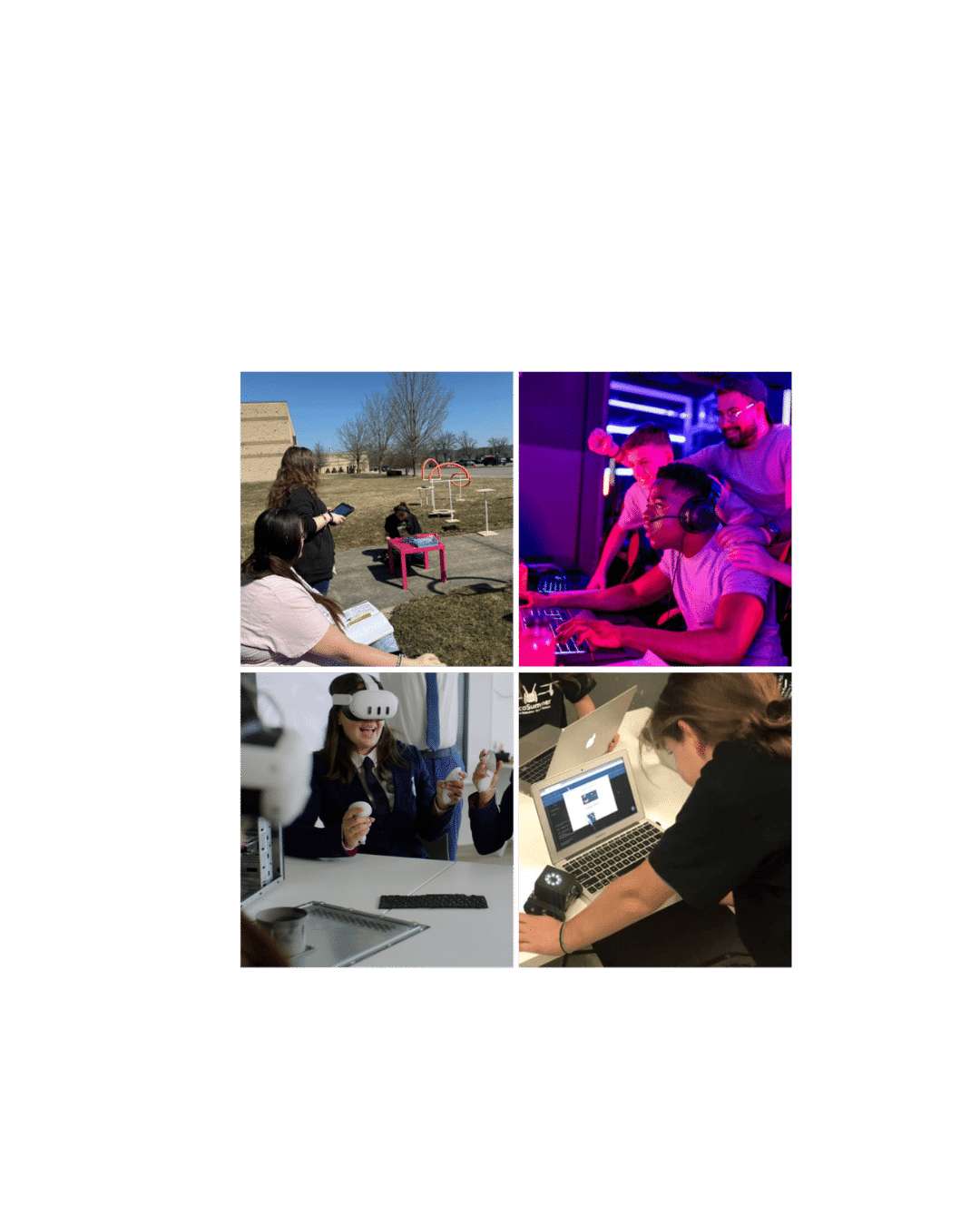

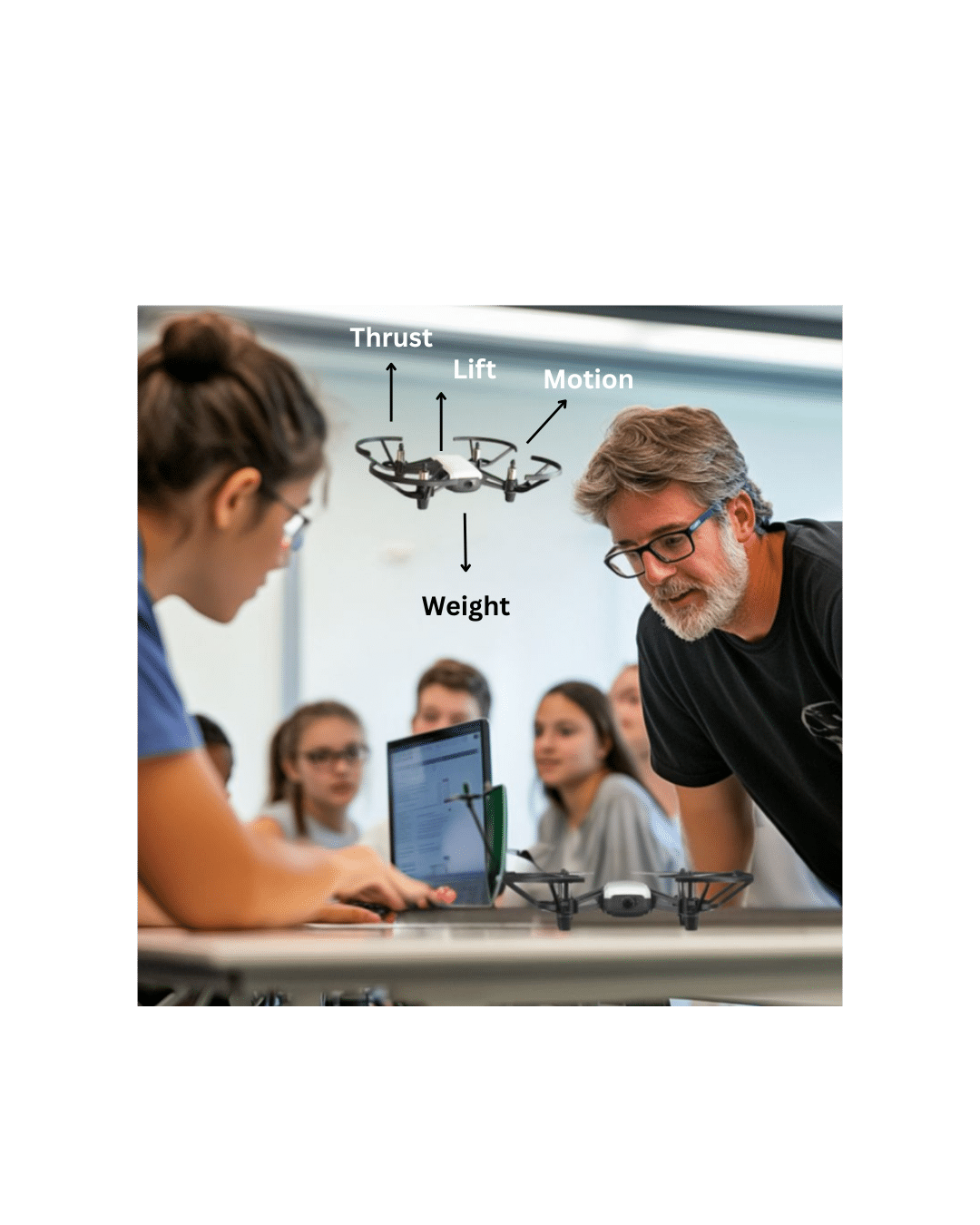

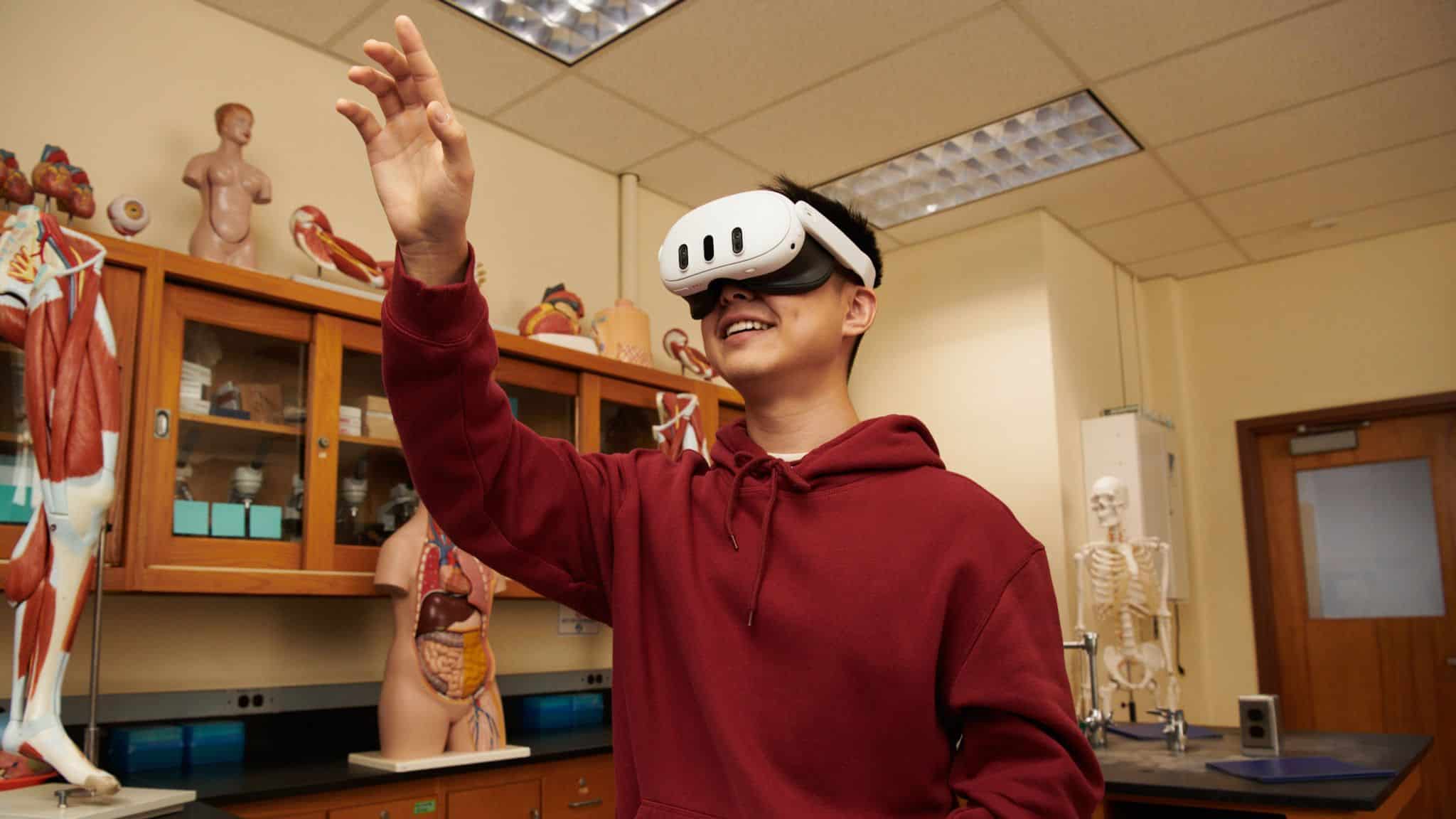

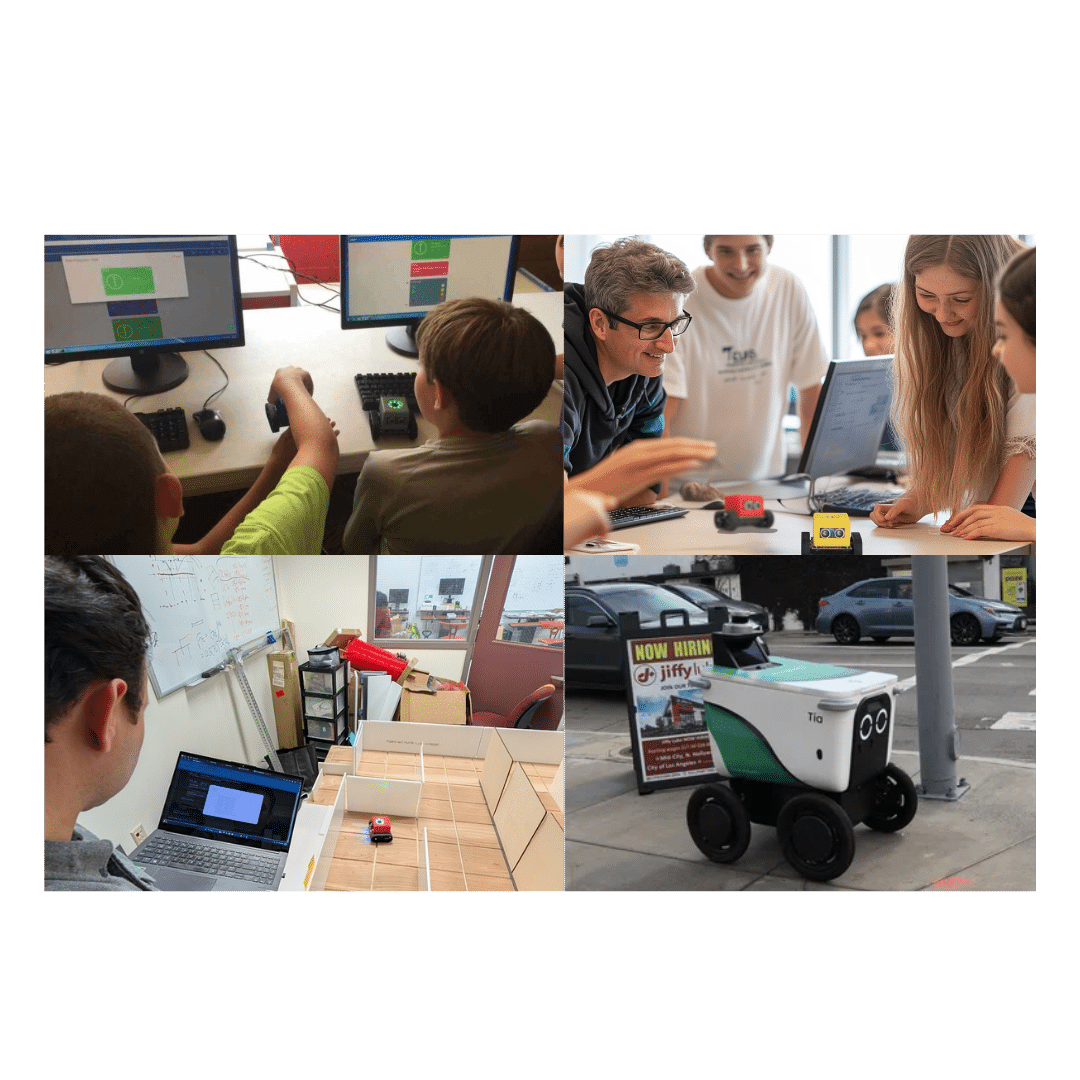

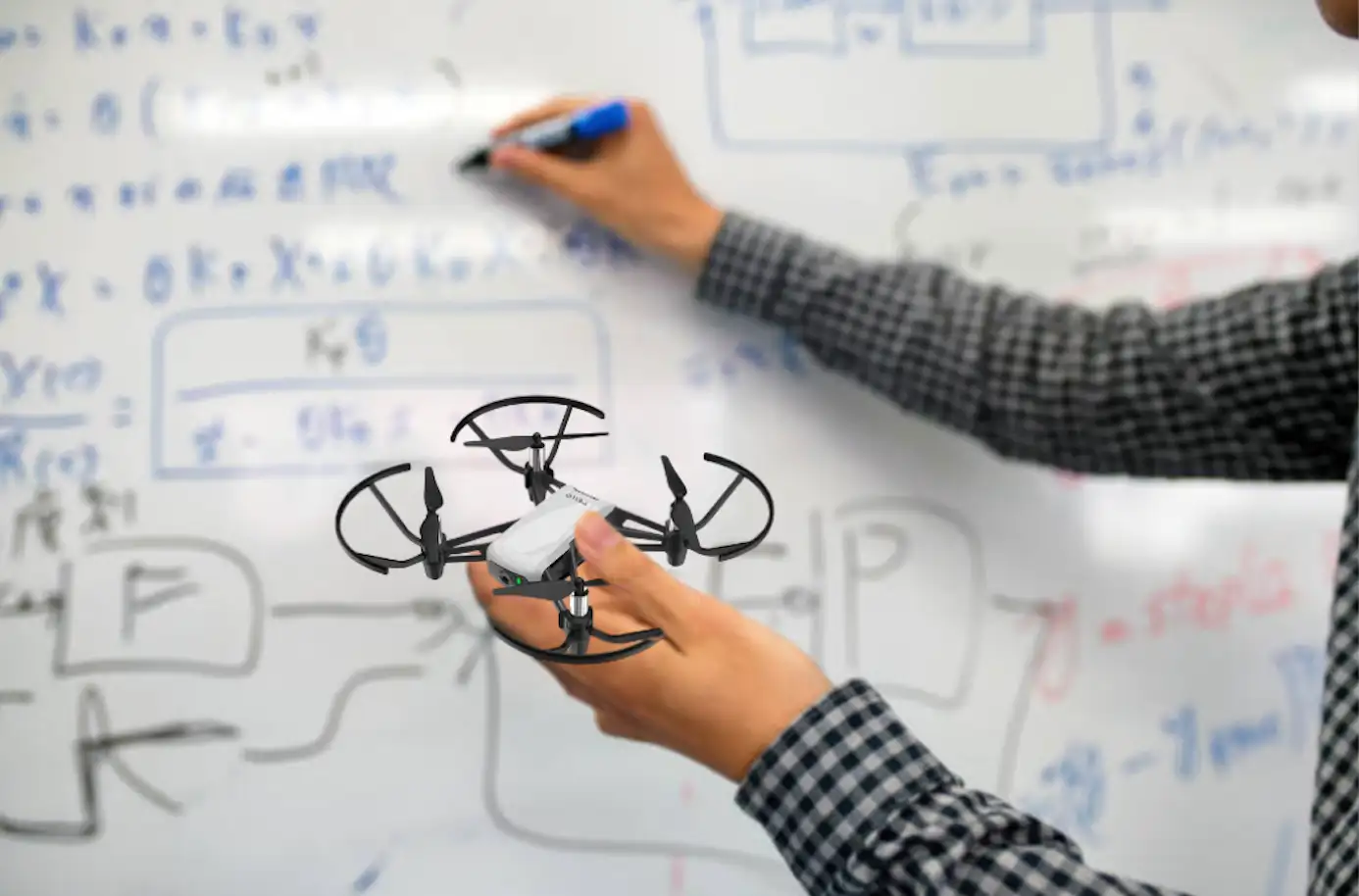

Bringing These Concepts into the Classroom

Students exploring robotics in the classroom today are learning how systems perceive the world. Concepts from this research can translate directly into classroom learning:

- Sensor-based navigation vs. vision-based systems

- Tradeoffs between power, weight, and performance

- Bio-inspired engineering design

- How AI models interpret real-world data

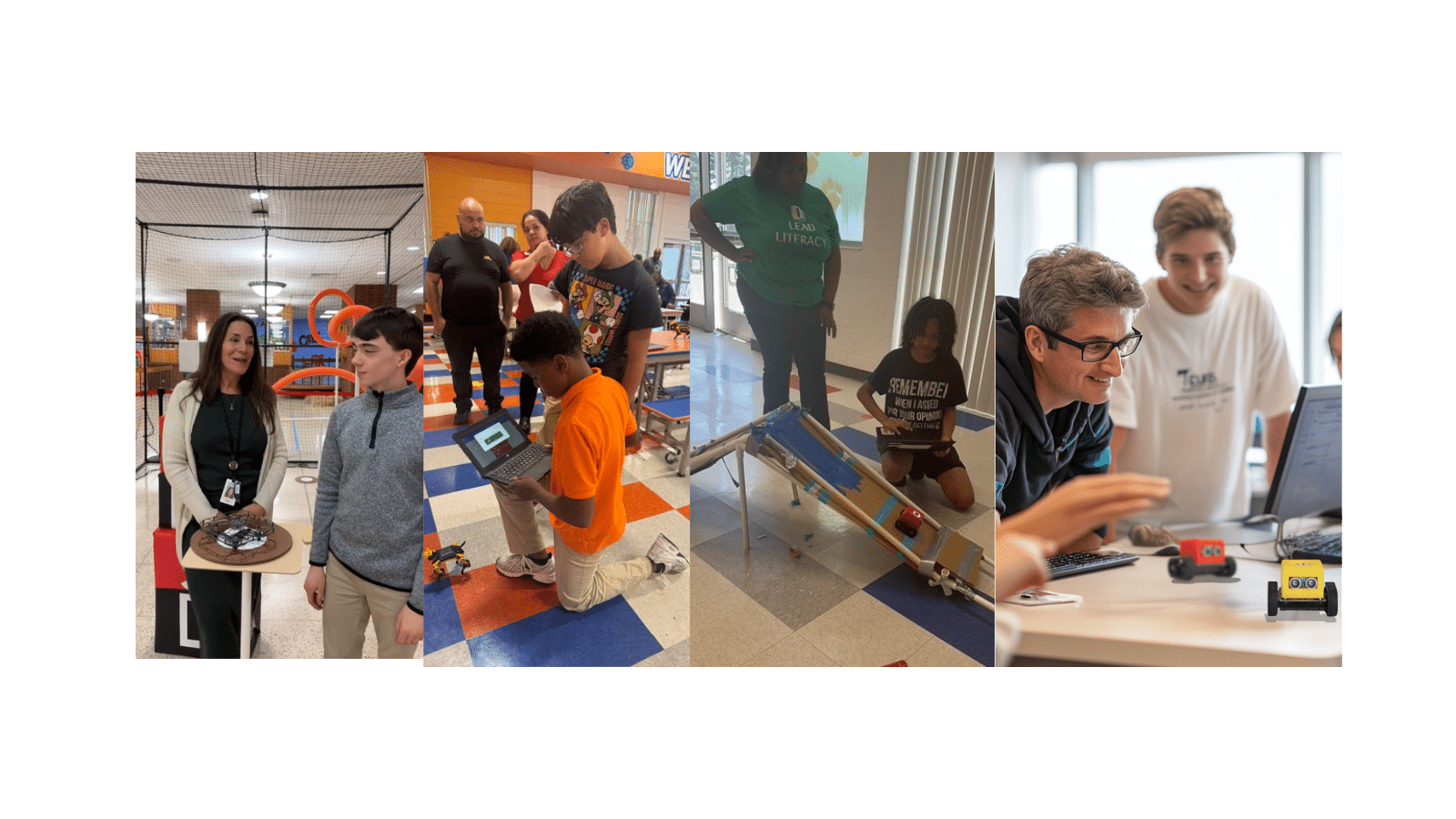

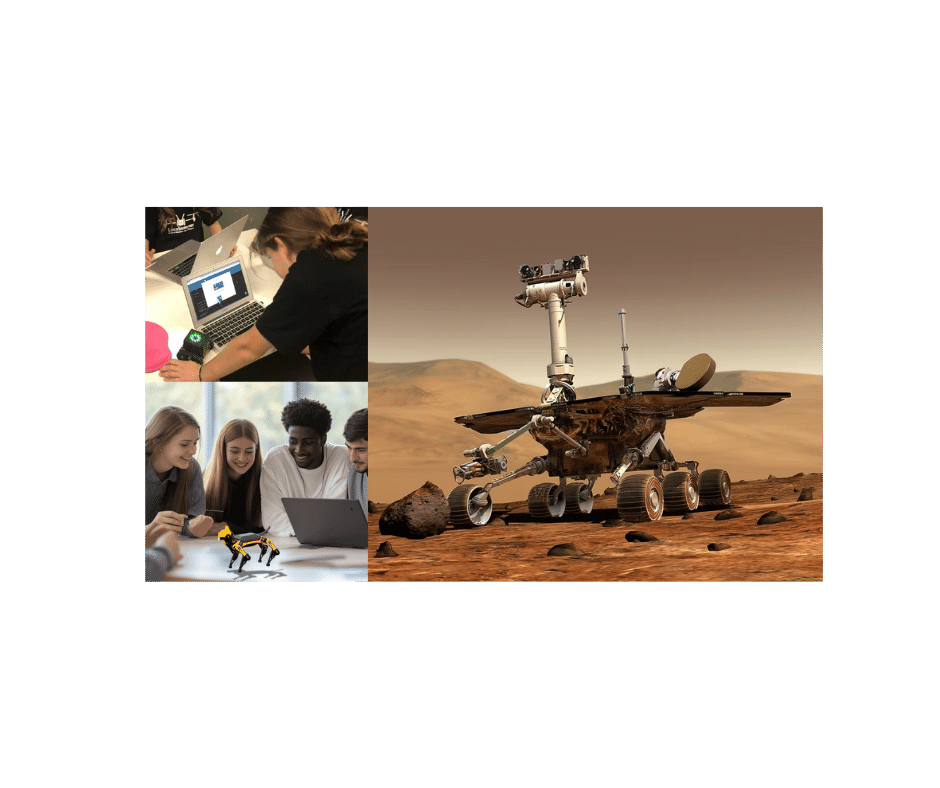

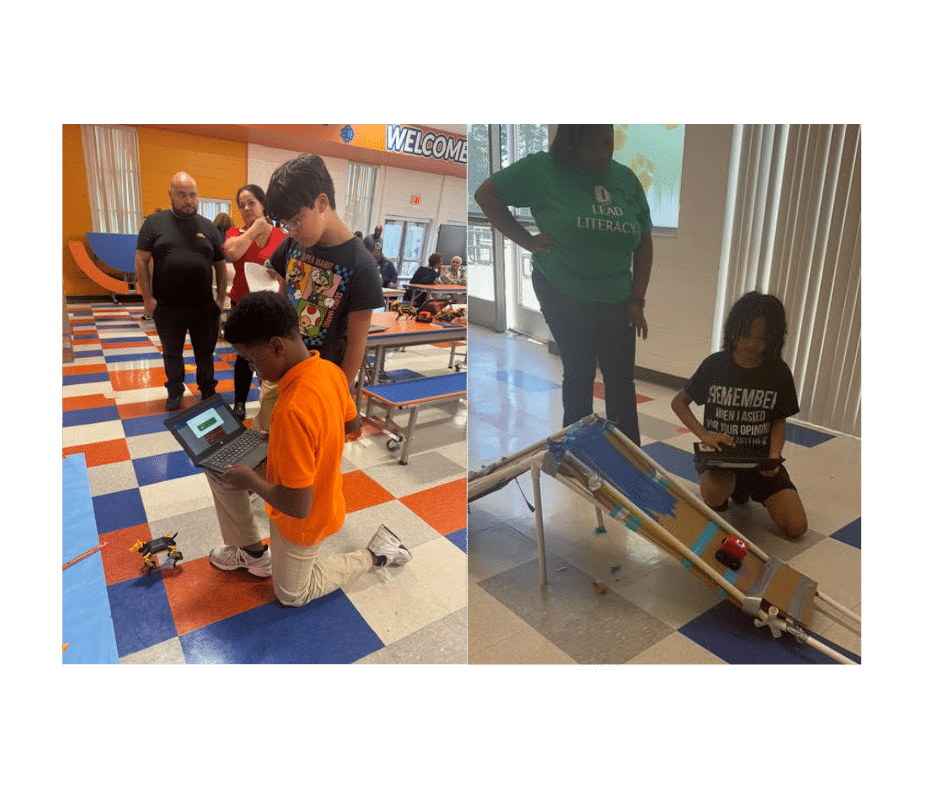

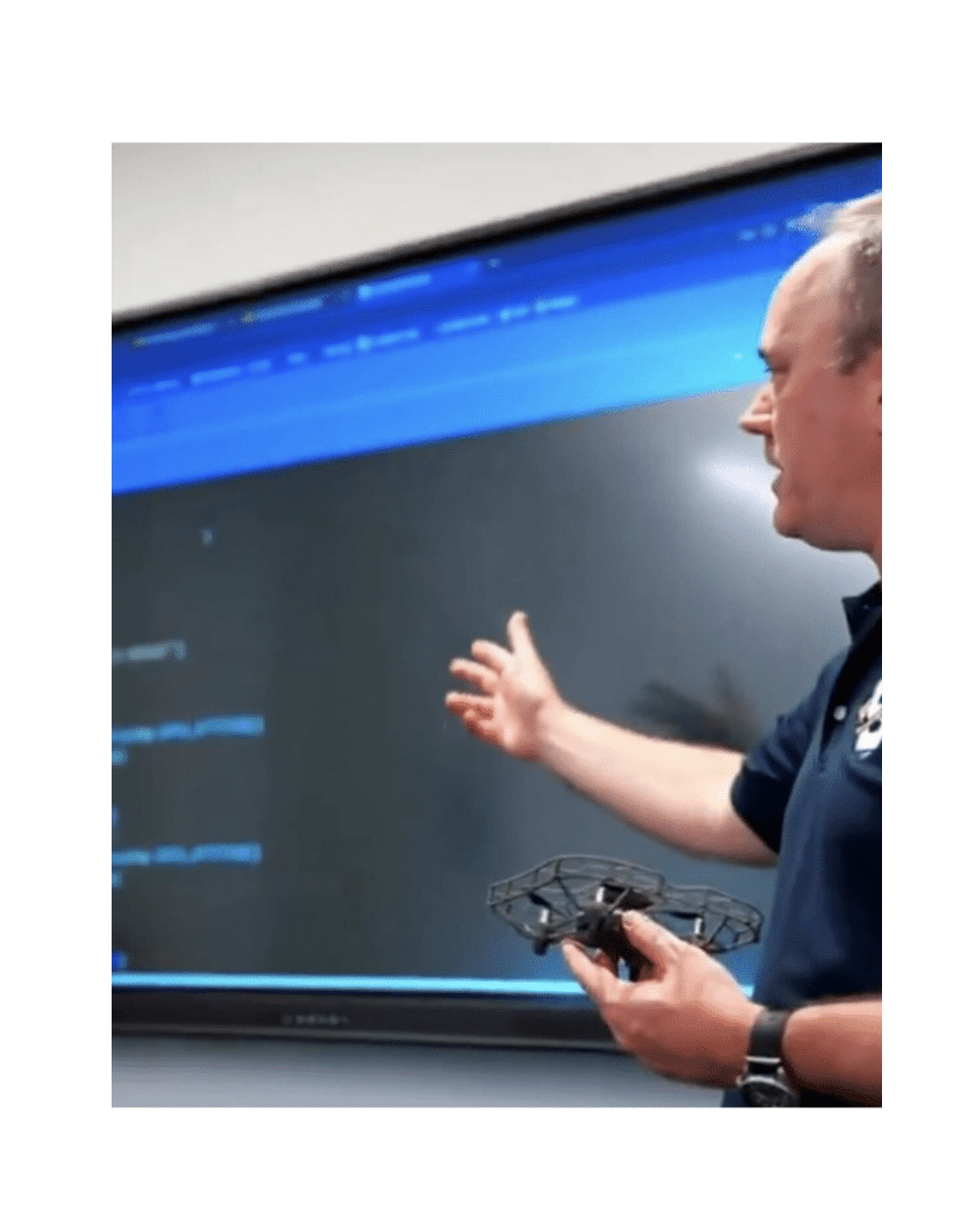

How LocoRobo Supports K-12 Robotics Learning

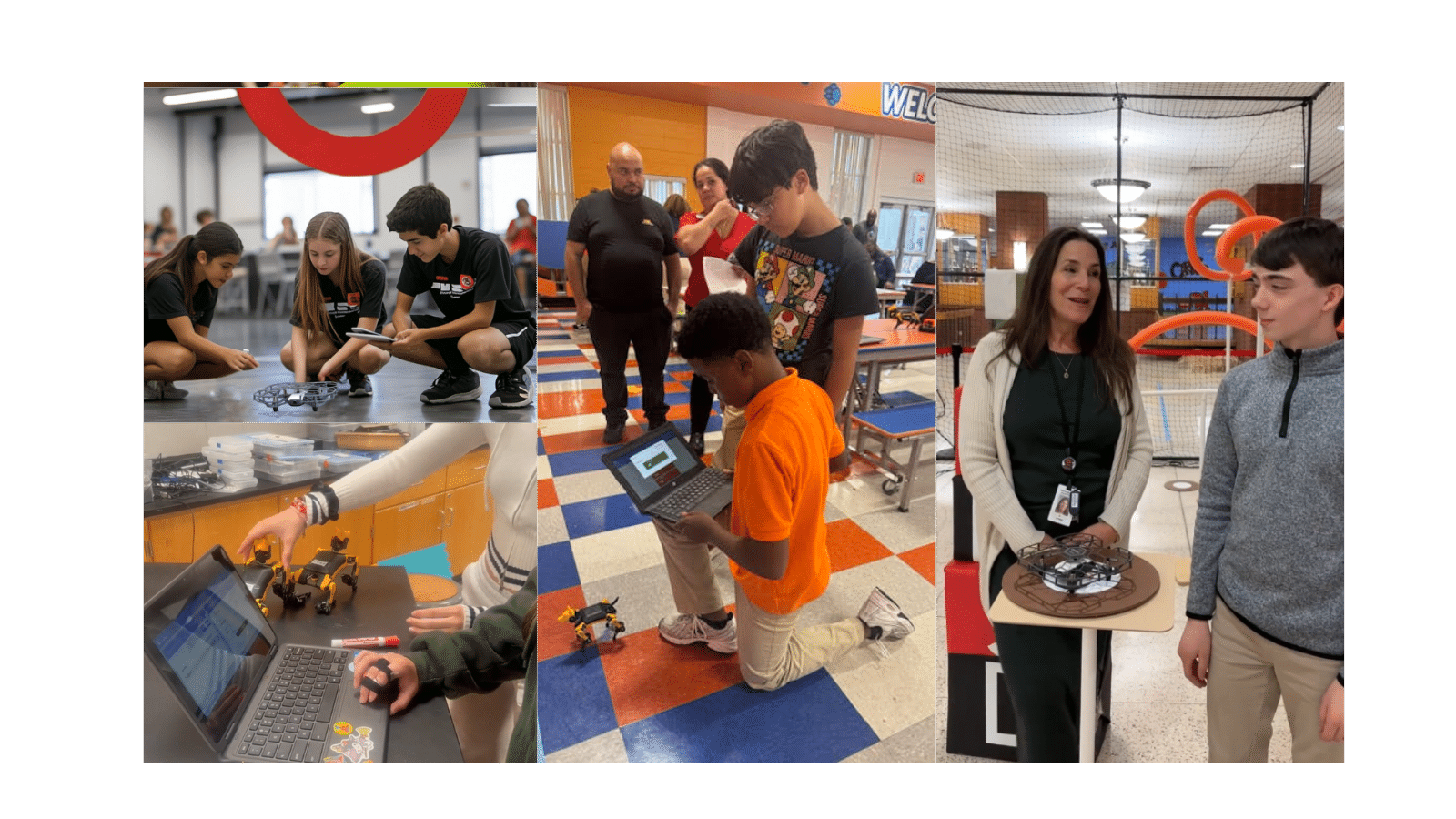

LocoRobo’s STEM robotics solutions are designed to help students explore these concepts through hands-on projects and structured learning pathways. Students can move from foundational coding to advanced robotics concepts, including decision-making, perception, and real-world problem solving. Each platform is supported by robotics curriculum and educator training, making it easier to bring advanced robotics topics into the classroom without adding complexity for teachers. Explore how schools are building robotics programs with LocoRobo.

Frequently Asked Questions

How do ultrasound sensors help drones navigate?

Ultrasound sensors emit sound waves and measure the time it takes for echoes to return. This helps drones detect obstacles and understand distance, even in low-visibility environments where cameras fail.
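As a rough illustration of the timing idea, the sketch below converts a round-trip echo time into a distance. The function names and the 5.8 ms reading are hypothetical, and the speed-of-sound formula is the common linear approximation for dry air, not something taken from the WPI system.

```python
def speed_of_sound(temp_c: float = 20.0) -> float:
    """Approximate speed of sound in dry air (m/s) at temp_c degrees Celsius."""
    return 331.3 + 0.606 * temp_c


def echo_distance(round_trip_s: float, temp_c: float = 20.0) -> float:
    """Distance to an obstacle (m) given the round-trip echo time (s)."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the obstacle and back, so halve the total path.
    return speed_of_sound(temp_c) * round_trip_s / 2.0


if __name__ == "__main__":
    # Hypothetical reading: an echo that returns after 5.8 milliseconds.
    print(f"{echo_distance(0.0058):.2f} m")
```

In a classroom setting, the same calculation applies to hobby-grade ultrasonic range finders: the sensor reports a time, and the code turns it into a distance.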

Why is ultrasound better than cameras in fog or smoke?

Cameras rely on light, which is disrupted by fog, smoke, or darkness. Ultrasound uses sound waves, which can travel through these conditions more reliably, allowing drones to maintain navigation.

What are the limitations of ultrasound-based navigation?

Ultrasound struggles with very thin objects like wires or small branches because they reflect weaker signals. Combining ultrasound with other sensors can help improve accuracy.

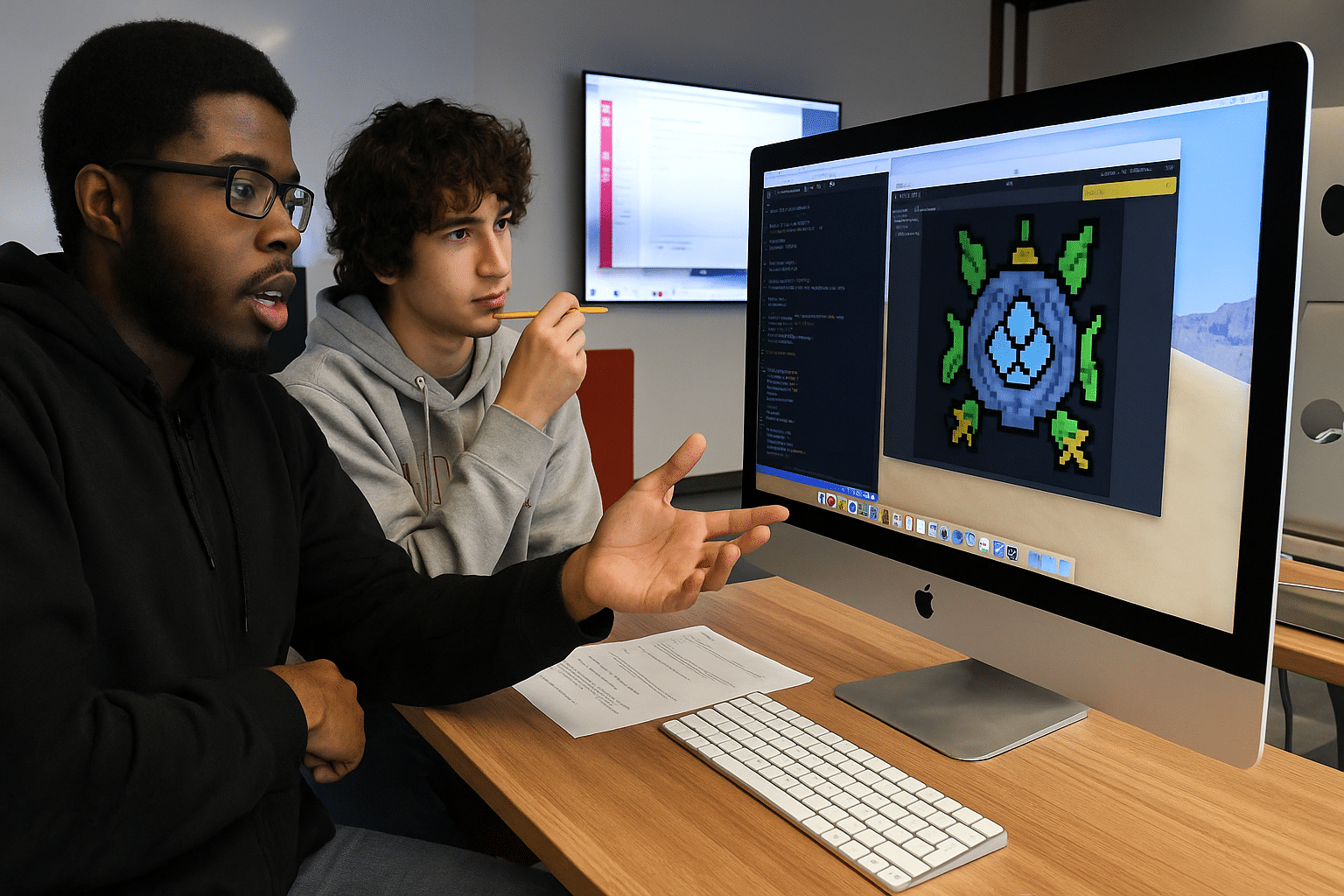

How is AI used in ultrasound navigation?

AI models, particularly deep learning, help interpret weak and complex echo signals. This allows drones to make decisions based on sound data, similar to how bats process echolocation.
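The details of the deep learning model are not described here, so the sketch below does not attempt to reproduce it. Instead, it uses a much simpler classical technique, a matched filter (cross-correlation), to show what finding a weak echo in noise can look like: a known pulse is slid along a noisy recording, and the best-matching position is reported. The Barker-13 pulse, the function names, the echo gain, and the noise level are all assumptions chosen for illustration.

```python
import random

# Barker-13 code: a standard pulse shape whose autocorrelation has one sharp peak.
# Using it here is an illustrative assumption, not the WPI team's signal design.
BARKER_13 = [1, 1, 1, 1, 1, -1, -1, 1, 1, -1, 1, -1, 1]


def simulate_recording(delay, echo_gain=0.5, noise_std=0.2, length=200, seed=0):
    """Build a noisy trace containing one attenuated echo starting at `delay`."""
    rng = random.Random(seed)
    recording = [rng.gauss(0.0, noise_std) for _ in range(length)]
    for i, p in enumerate(BARKER_13):
        recording[delay + i] += echo_gain * p
    return recording


def find_echo_delay(recording, pulse=BARKER_13):
    """Return the sample offset where the pulse correlates best with the trace."""
    best_offset, best_score = 0, float("-inf")
    for offset in range(len(recording) - len(pulse) + 1):
        # Correlation score: large when the trace at this offset looks like the pulse.
        score = sum(p * recording[offset + i] for i, p in enumerate(pulse))
        if score > best_score:
            best_offset, best_score = offset, score
    return best_offset


if __name__ == "__main__":
    trace = simulate_recording(delay=120)
    print("estimated echo delay (samples):", find_echo_delay(trace))
```

A fixed filter like this only works when the echo keeps the shape of the emitted pulse. Real echoes are distorted by surfaces, overlapping reflections, and propeller noise, which is one reason a learned model can be a better fit for the task.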

How can students learn these concepts in school robotics programs?

Students can explore sensor-based navigation, AI decision-making, and autonomous movement using robotics platforms like LocoRobo. These systems provide hands-on experience with the same principles used in real-world robotics research.