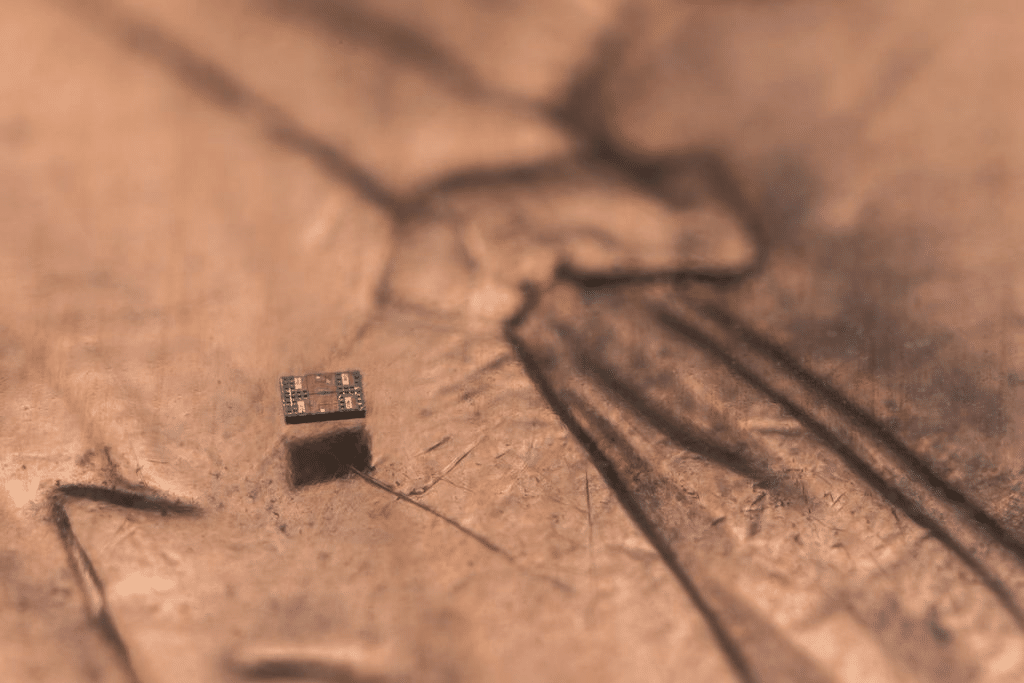

From city streets to science classrooms, autonomous systems are becoming an everyday reality. Self-driving cars navigate traffic. Robotic dogs assist in search and rescue. And at the heart of it all? Sensors—small but powerful technologies enabling machines to perceive and respond to their environment.

Sensors: The Eyes and Ears of Autonomous Machines

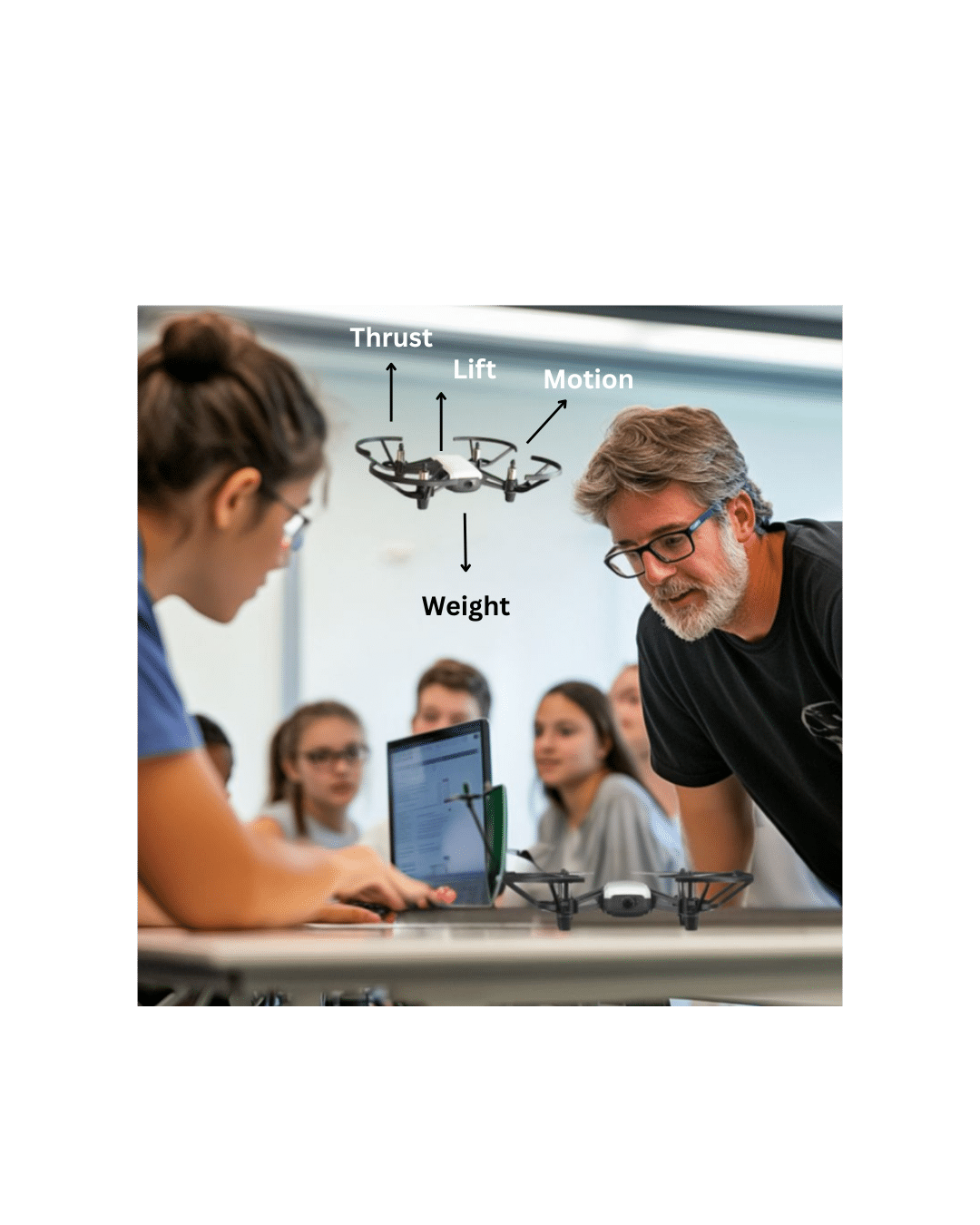

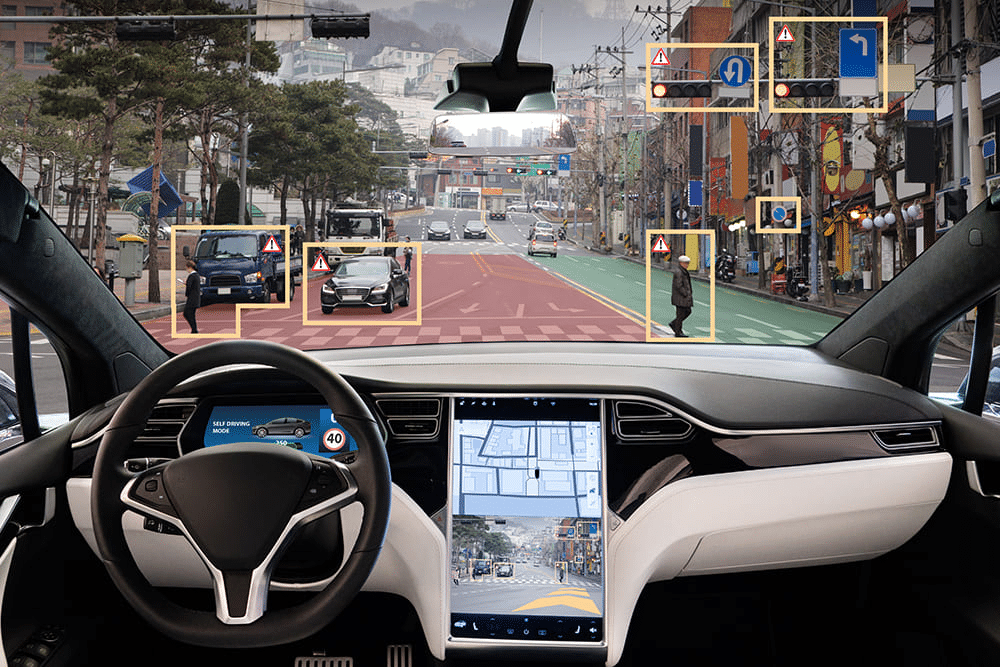

Whether it is a robot dog climbing uneven terrain or a car avoiding a pedestrian, the decision-making starts with sensors. Cameras, LiDAR, radar, GPS, ultrasonic rangefinders, and inertial measurement units (IMUs, which combine gyroscopes and accelerometers) feed real-time data into onboard processors. The machine interprets this data to map its surroundings, detect obstacles, and plan movement.

In self-driving cars:

- LiDAR creates a 3D map of the environment.

- Radar gauges distances and speeds of nearby objects.

- Cameras detect lane markings, signs, and traffic lights.

- GPS and IMUs help localize the vehicle’s position and orientation.

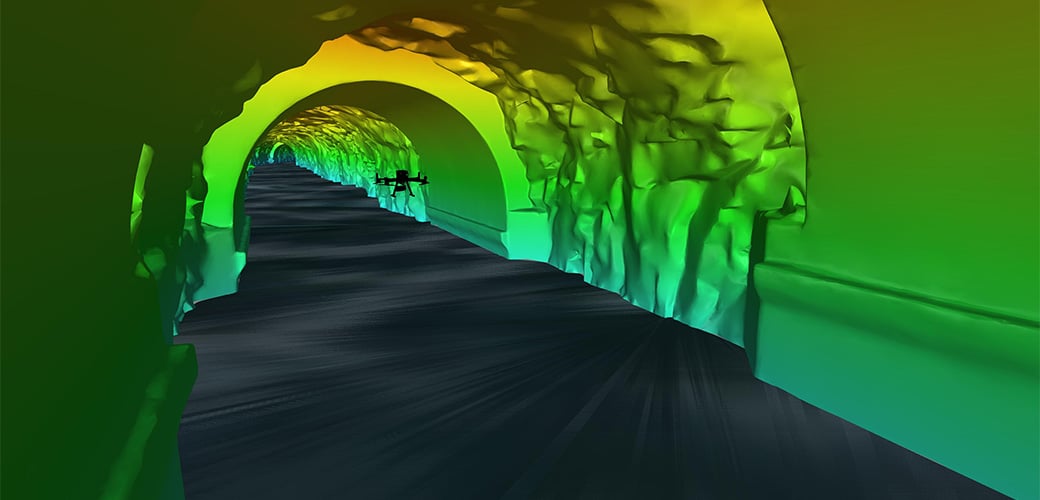

In robot dogs:

- Depth cameras help the robot understand the 3D structure of its surroundings.

- Force sensors in the legs adjust walking patterns to different surfaces.

- Proximity and vision sensors allow the robot to avoid collisions and climb stairs.

These systems must continuously fuse data from multiple sensors to operate safely, which shows the value of real-time processing and sensor integration.
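To make the idea of fusing sensor data concrete, here is a minimal sketch of a complementary filter, one common and simple way to combine a gyroscope with an accelerometer to estimate tilt. It is an illustration only, not how any particular car or robot dog does it. The function name `fuse_tilt`, its parameters, the axis convention, and the 0.98 blend factor are all assumptions chosen for the example.

```python
import math


def fuse_tilt(prev_angle_deg, gyro_rate_dps, accel_x_g, accel_z_g, dt_s, alpha=0.98):
    """Blend a gyroscope and an accelerometer into one tilt estimate (degrees).

    All names and the axis convention here are hypothetical, for illustration.
    """
    # Gyroscope: integrate the rotation rate. Smooth, but drifts over time.
    gyro_angle = prev_angle_deg + gyro_rate_dps * dt_s
    # Accelerometer: read tilt from the direction of gravity. Noisy, but drift-free.
    accel_angle = math.degrees(math.atan2(accel_x_g, accel_z_g))
    # Trust the gyroscope in the short term and the accelerometer in the long term.
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle


# Simulated readings: the robot is held still at a 45 degree tilt.
angle = 0.0
for _ in range(500):
    angle = fuse_tilt(angle, gyro_rate_dps=0.0, accel_x_g=1.0, accel_z_g=1.0, dt_s=0.01)
print(round(angle, 1))  # 45.0
```

The estimate starts at zero and settles on the true tilt as the accelerometer term pulls it in, while the gyroscope term would keep it responsive during fast motion.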

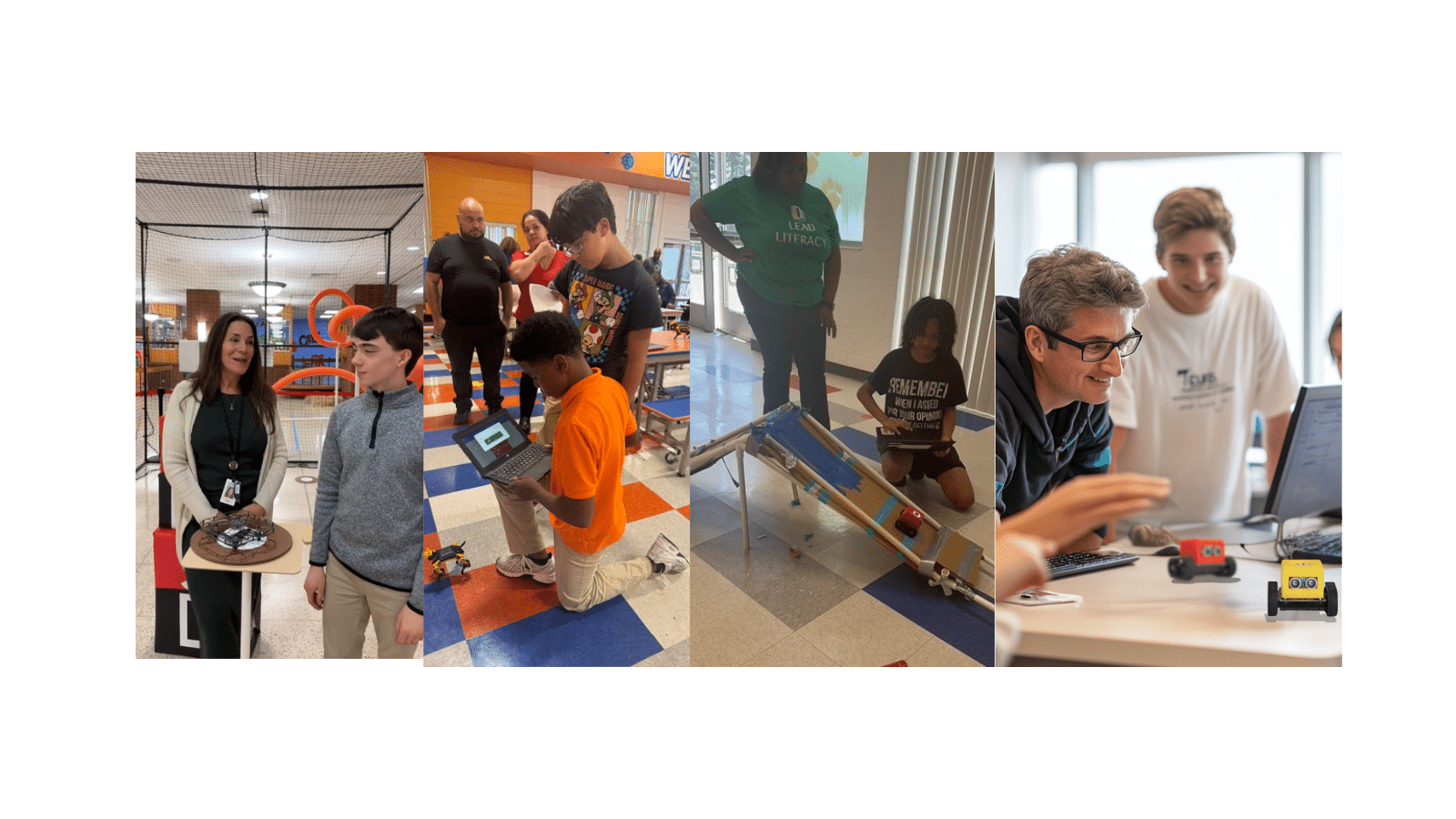

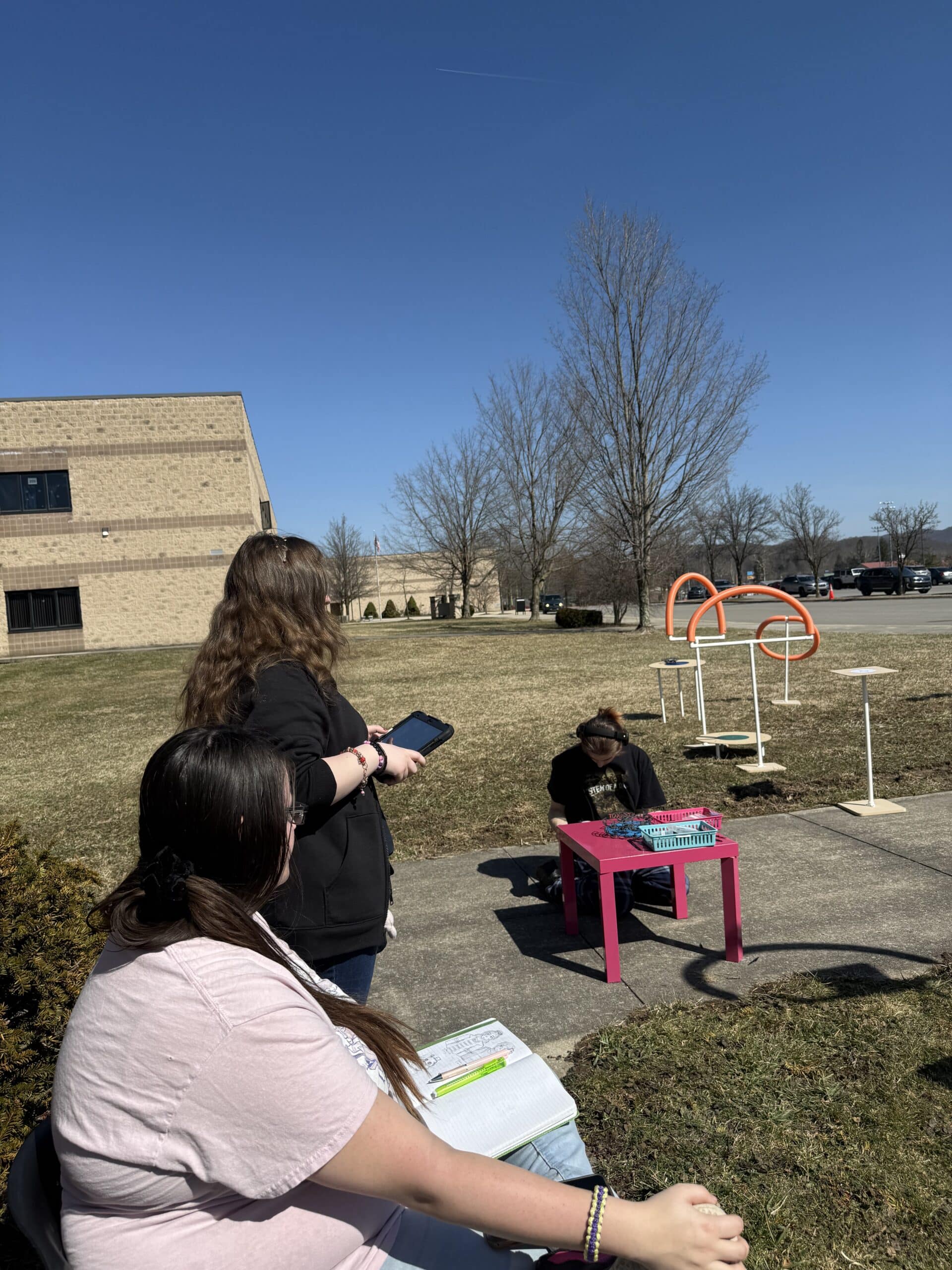

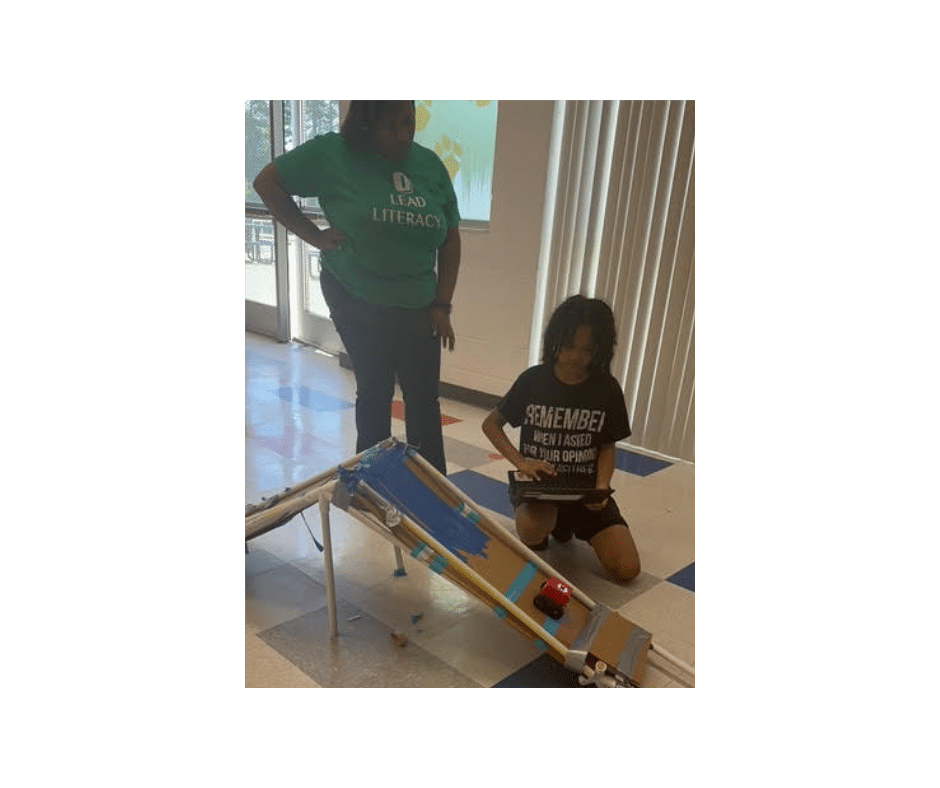

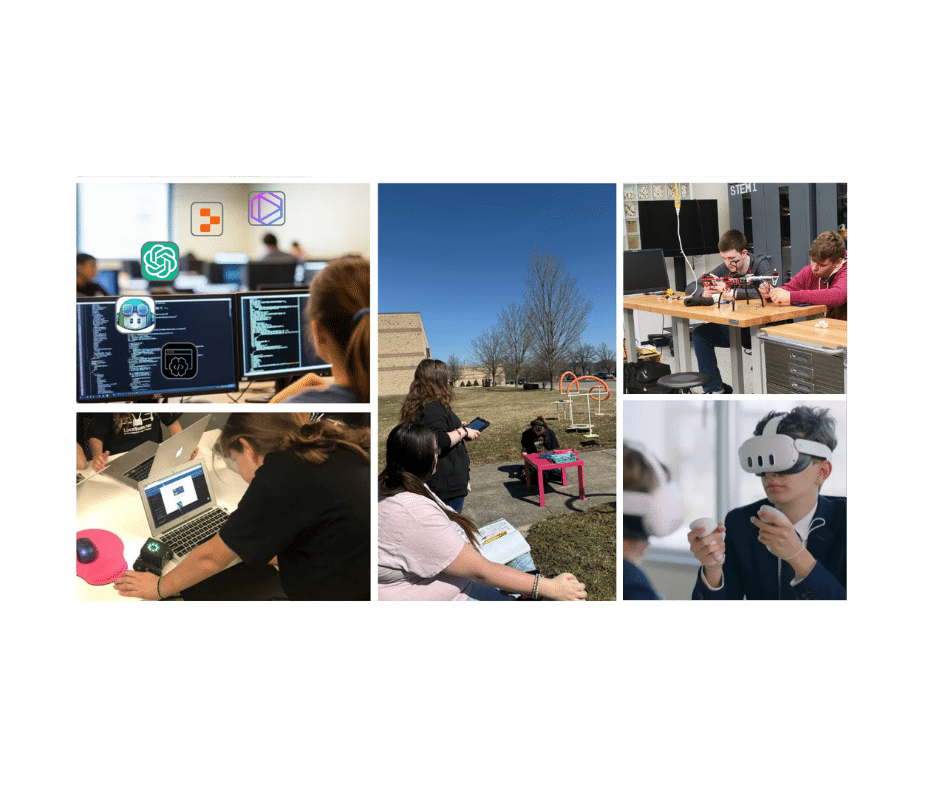

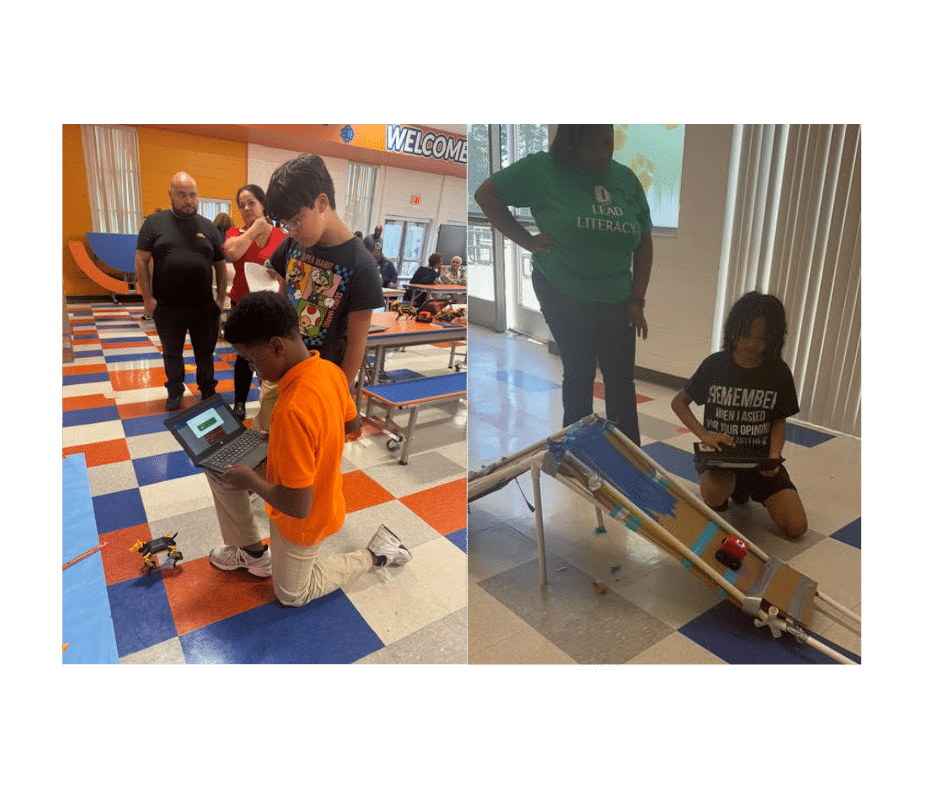

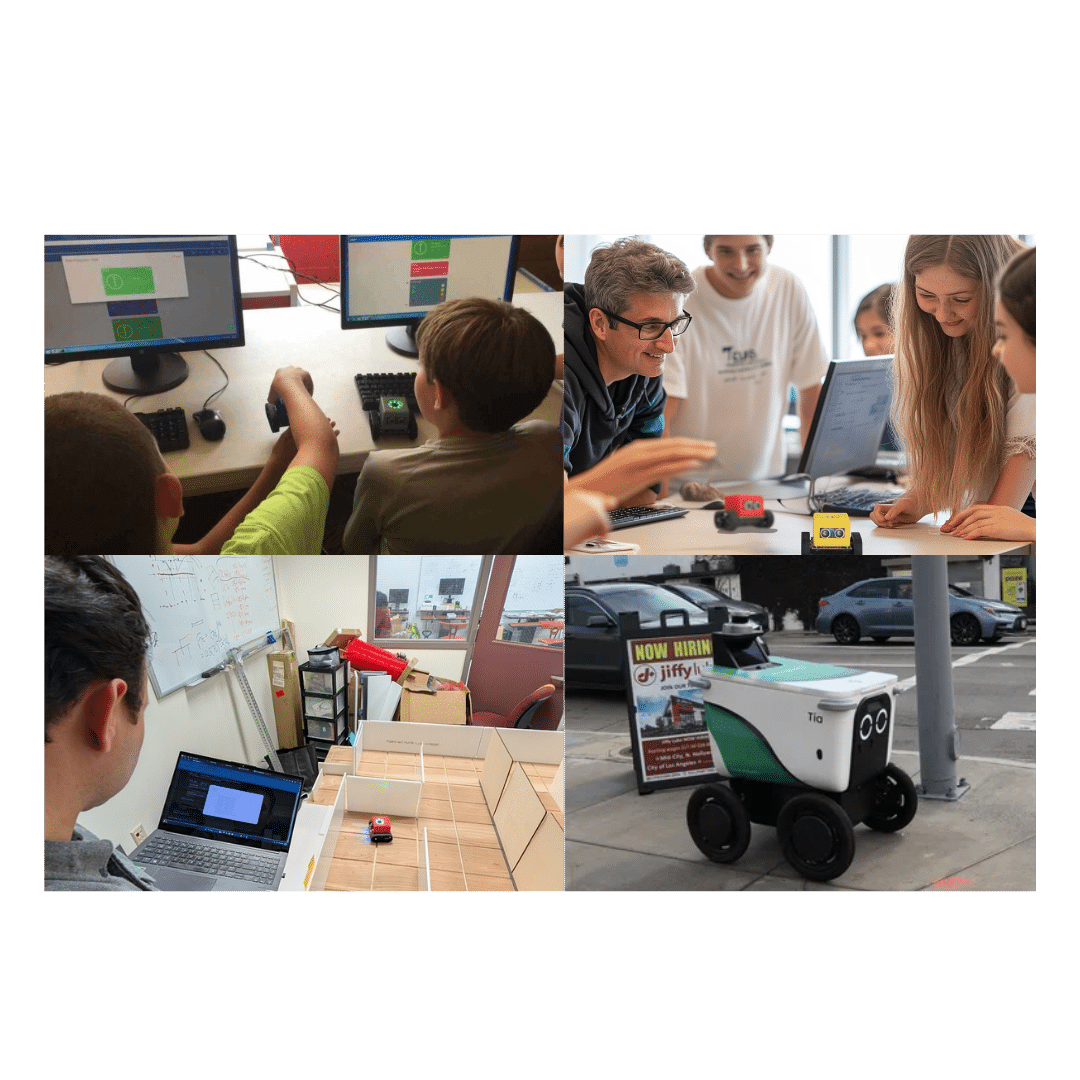

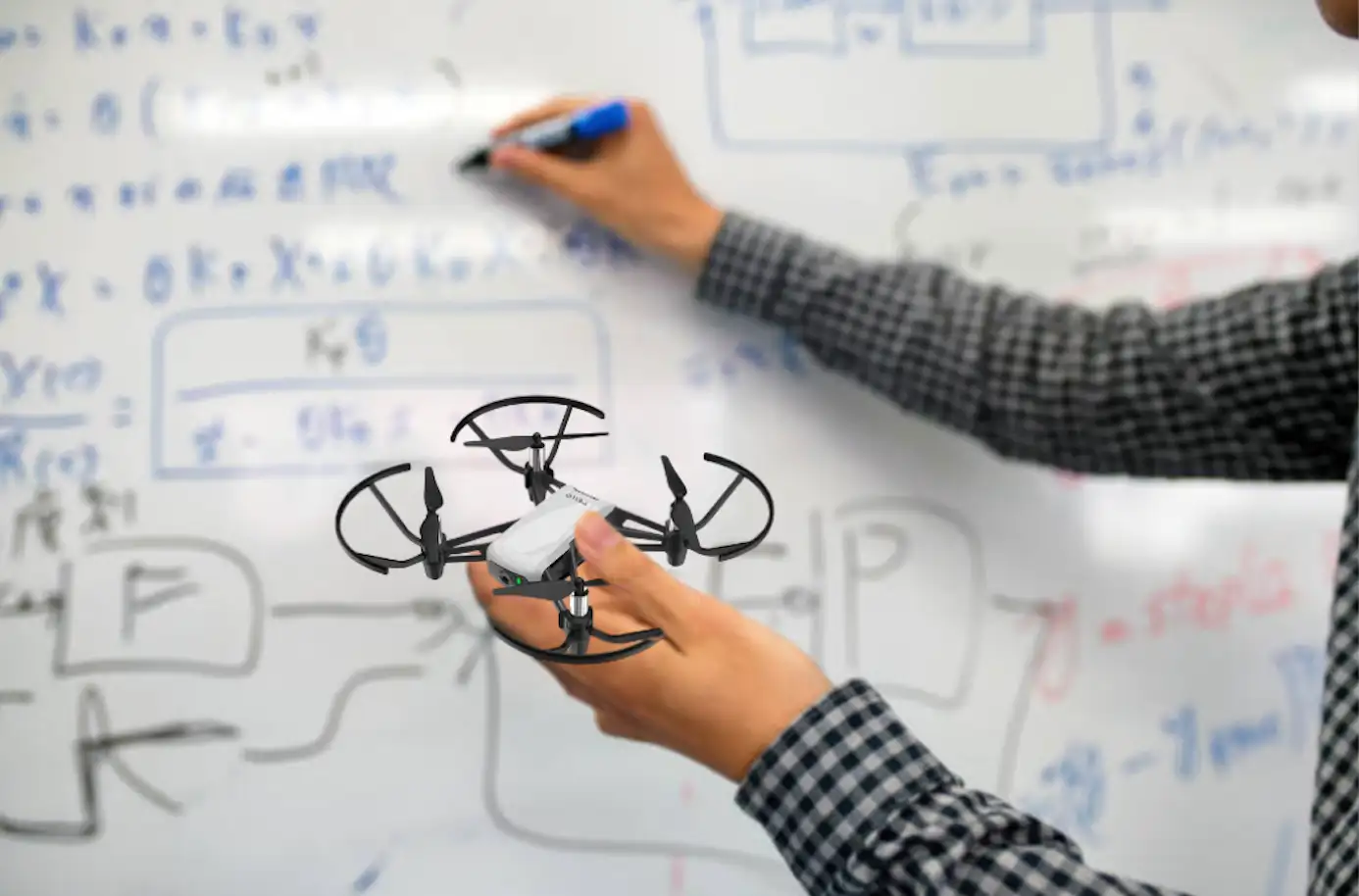

Bringing Sensor Science Into the Classroom

Understanding how sensors enable autonomy is an ideal entry point for project-based STEM learning. Sensor-based learning gives students a foundation in systems thinking: understanding how inputs (sensor data) connect to processing (coding and logic) and outputs (movement and action). It’s a powerful way to develop core STEM skills, including:

- Data analysis and interpretation

- Systems integration

- Real-time decision-making

- Robotics and AI applications
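The input, processing, and output chain described above can be sketched in a few lines of code. This is a simplified classroom-style example using simulated distance readings. The function names and the 20 cm and 50 cm thresholds are assumptions for illustration, not values from any specific robot.

```python
def decide(distance_cm, stop_threshold_cm=20.0, slow_threshold_cm=50.0):
    """Processing step: turn one distance reading into a drive command."""
    if distance_cm < stop_threshold_cm:
        return "stop"
    if distance_cm < slow_threshold_cm:
        return "slow"
    return "forward"


def run_loop(readings):
    """Run the sense, think, act cycle over a sequence of simulated readings."""
    commands = []
    for distance_cm in readings:       # input: one sensor reading
        command = decide(distance_cm)  # processing: logic applied to the reading
        commands.append(command)       # output: on a real robot this would drive motors
    return commands


print(run_loop([120.0, 45.0, 15.0]))  # ['forward', 'slow', 'stop']
```

Students can change the thresholds or add new commands and immediately see how the output changes for the same inputs.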

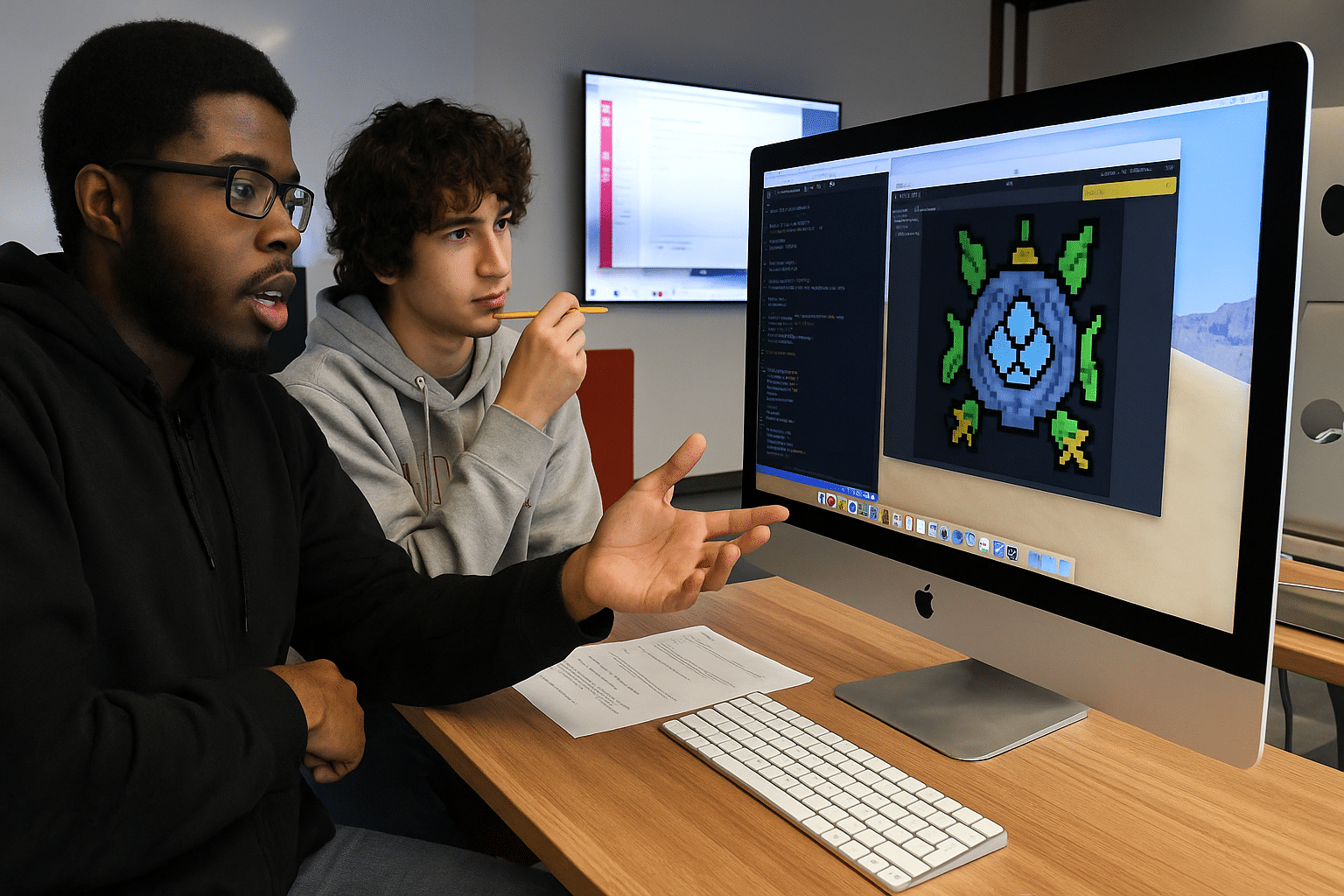

Why Physical Computing with Sensors Matters in Computer Science Education

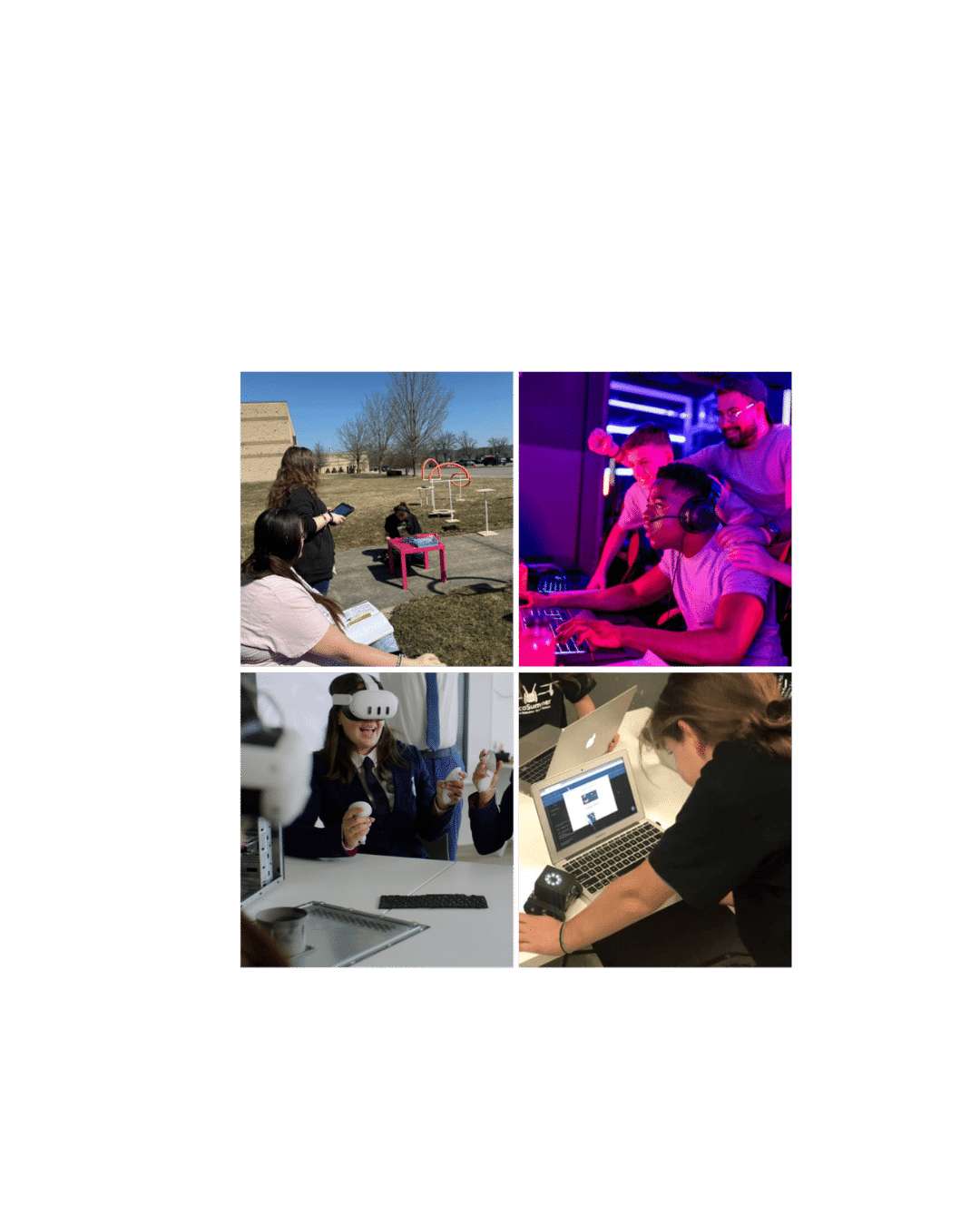

In computer science, it’s one thing to write code and another to see that code come alive through real-world interaction. That’s the power of physical computing with sensors. It bridges the gap between abstract algorithms and tangible outcomes, giving students a deeper understanding of how software controls hardware in real time.

When students use sensors to collect data, respond to environmental changes, and drive robotic behavior, they are engineering interactive systems. This experience helps build critical CS concepts such as:

- Input/Output Processing: Understanding how data flows between processors, sensors, and actuators

- Event-Driven Programming: Writing code that reacts to changes in sensor input

- Algorithm Design: Developing logic to guide autonomous behavior

- Debugging and Iteration: Refining code based on real-world performance

It also introduces students to embedded systems, AI, and robotics, making computer science more engaging and applicable to real-world tech.
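As an illustration of event-driven programming with sensors, the sketch below fires a handler only when a reading crosses a threshold, rather than on every reading. The `SensorEvents` class, the event names, and the 20 cm threshold are hypothetical and exist only for this example. Real robotics kits expose their own event APIs.

```python
class SensorEvents:
    """A tiny event dispatcher: handlers subscribe to named events."""

    def __init__(self):
        self._handlers = {}

    def on(self, event_name, handler):
        # Register a function to call whenever event_name is emitted.
        self._handlers.setdefault(event_name, []).append(handler)

    def emit(self, event_name, value):
        # Call every handler registered for this event.
        for handler in self._handlers.get(event_name, []):
            handler(value)


def watch_distance(events, readings, threshold_cm=20.0):
    """Emit events only when the reading crosses the threshold."""
    was_near = False
    for distance_cm in readings:
        is_near = distance_cm < threshold_cm
        if is_near and not was_near:
            events.emit("obstacle_near", distance_cm)
        elif was_near and not is_near:
            events.emit("obstacle_cleared", distance_cm)
        was_near = is_near


log = []
events = SensorEvents()
events.on("obstacle_near", lambda d: log.append(("near", d)))
events.on("obstacle_cleared", lambda d: log.append(("cleared", d)))
watch_distance(events, [100.0, 15.0, 10.0, 60.0])
print(log)  # [('near', 15.0), ('cleared', 60.0)]
```

The second close reading (10.0) triggers nothing because the state has not changed, which is the key difference from polling logic that reacts to every sample.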

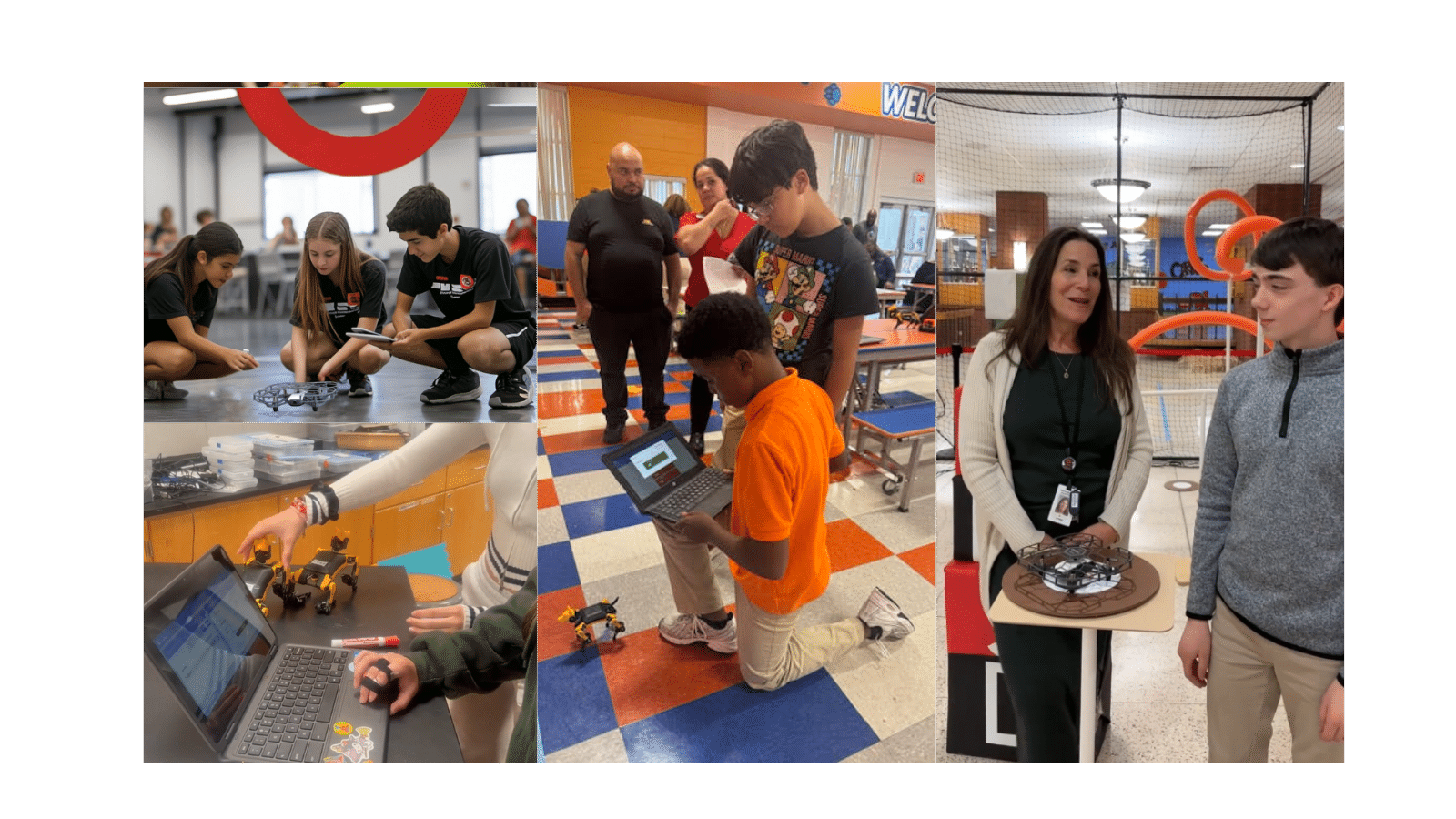

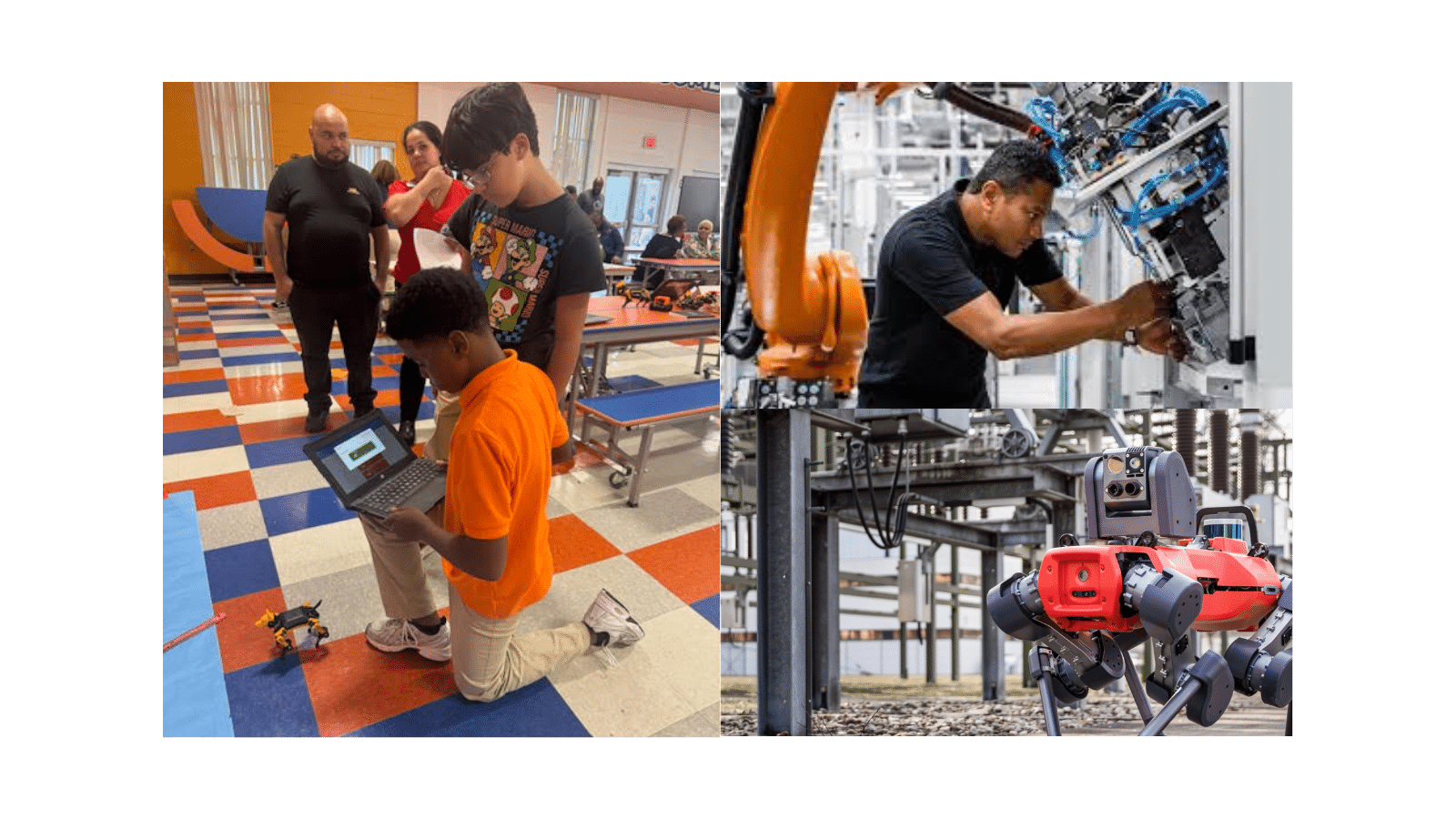

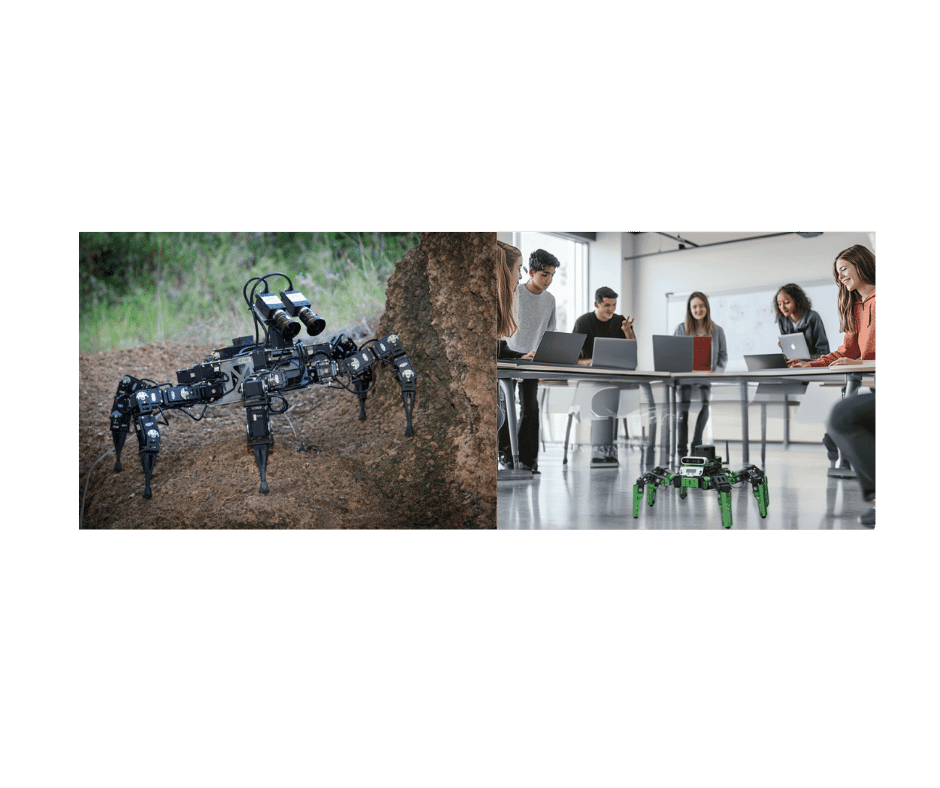

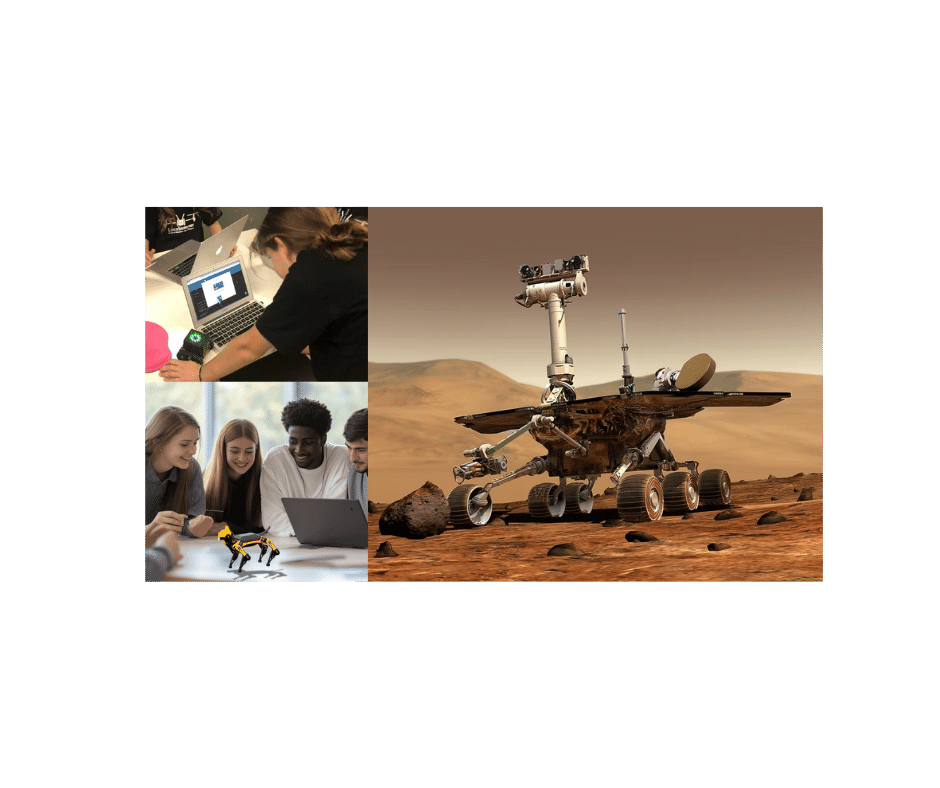

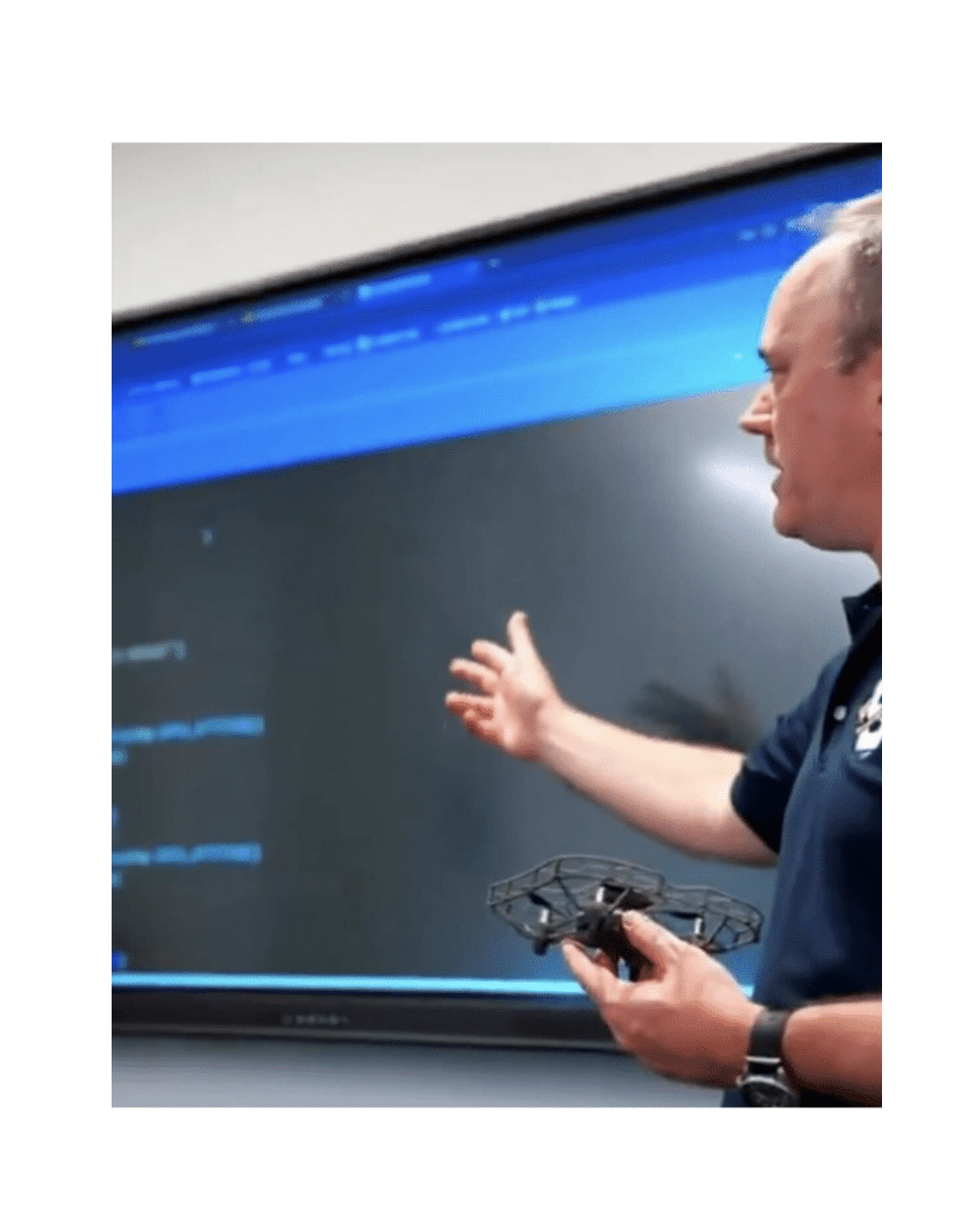

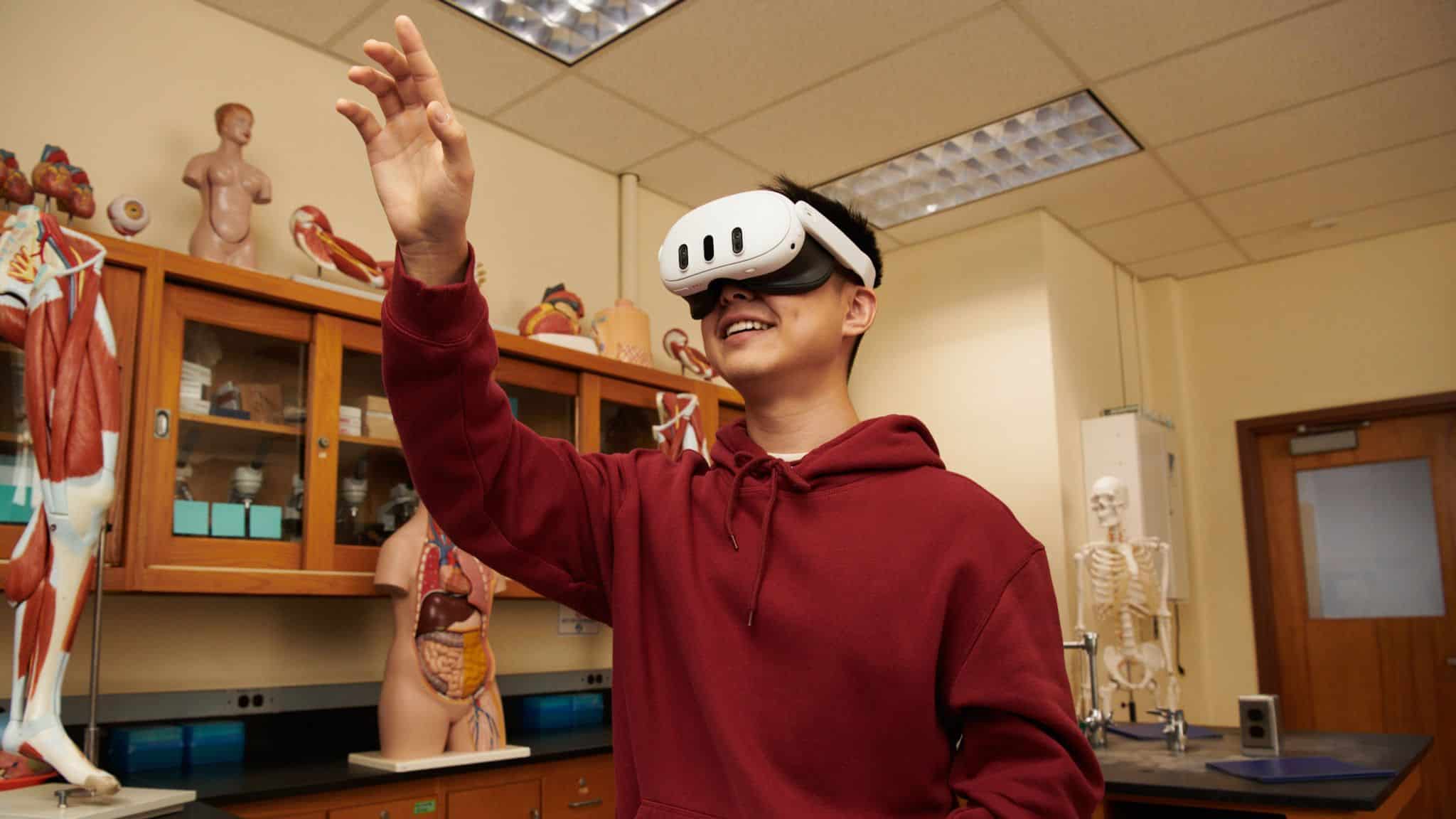

Meet LocoScout: A Robot Dog Built for STEM Exploration

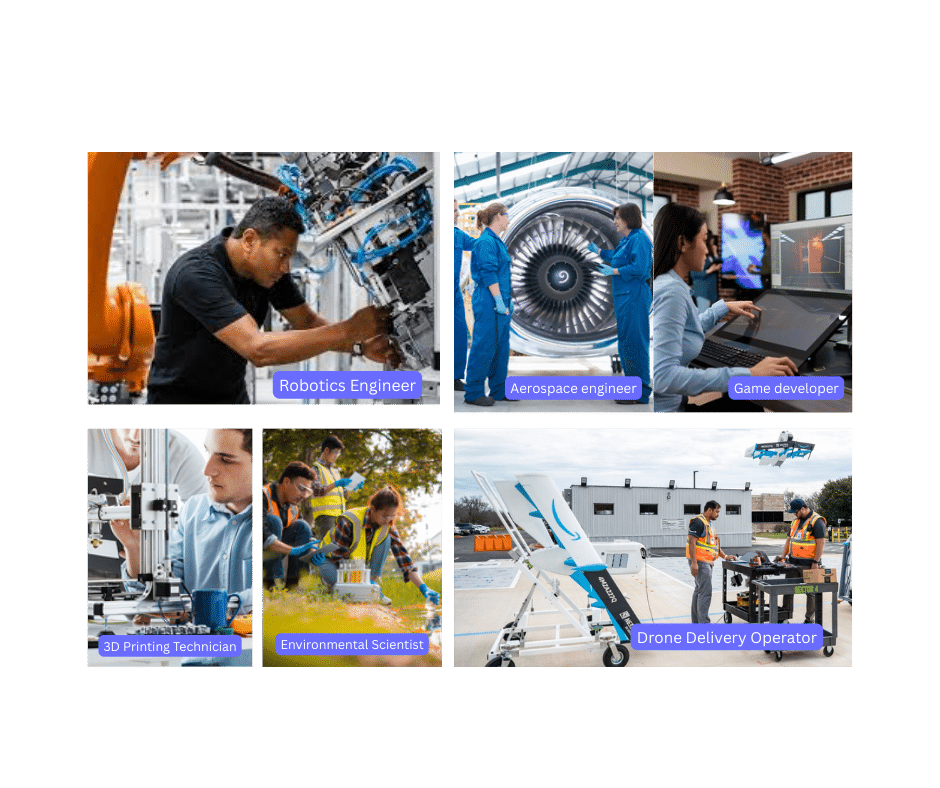

Curious how robot dogs like Boston Dynamics’ Spot work? LocoScout brings a quadruped robotics system to the classroom with an agile, programmable platform designed for hands-on learning. Students can program lifelike walking, trotting, and turning behaviors while integrating real-time sensor responses and custom gait control.

With LocoScout, students learn how to program a robot’s movements, then test and refine them. From behavioral programming to environmental interactions and adaptive routines, LocoScout invites students to explore the complex world of robotic balance, locomotion, and decision-making.

Whether you are preparing students for STEM career exploration or introducing robotics in a creative, engaging way, LocoScout delivers a powerful, project-ready solution, with a comprehensive STEM education curriculum to support every step.