What if building a piece of furniture were as simple as saying what you wanted?

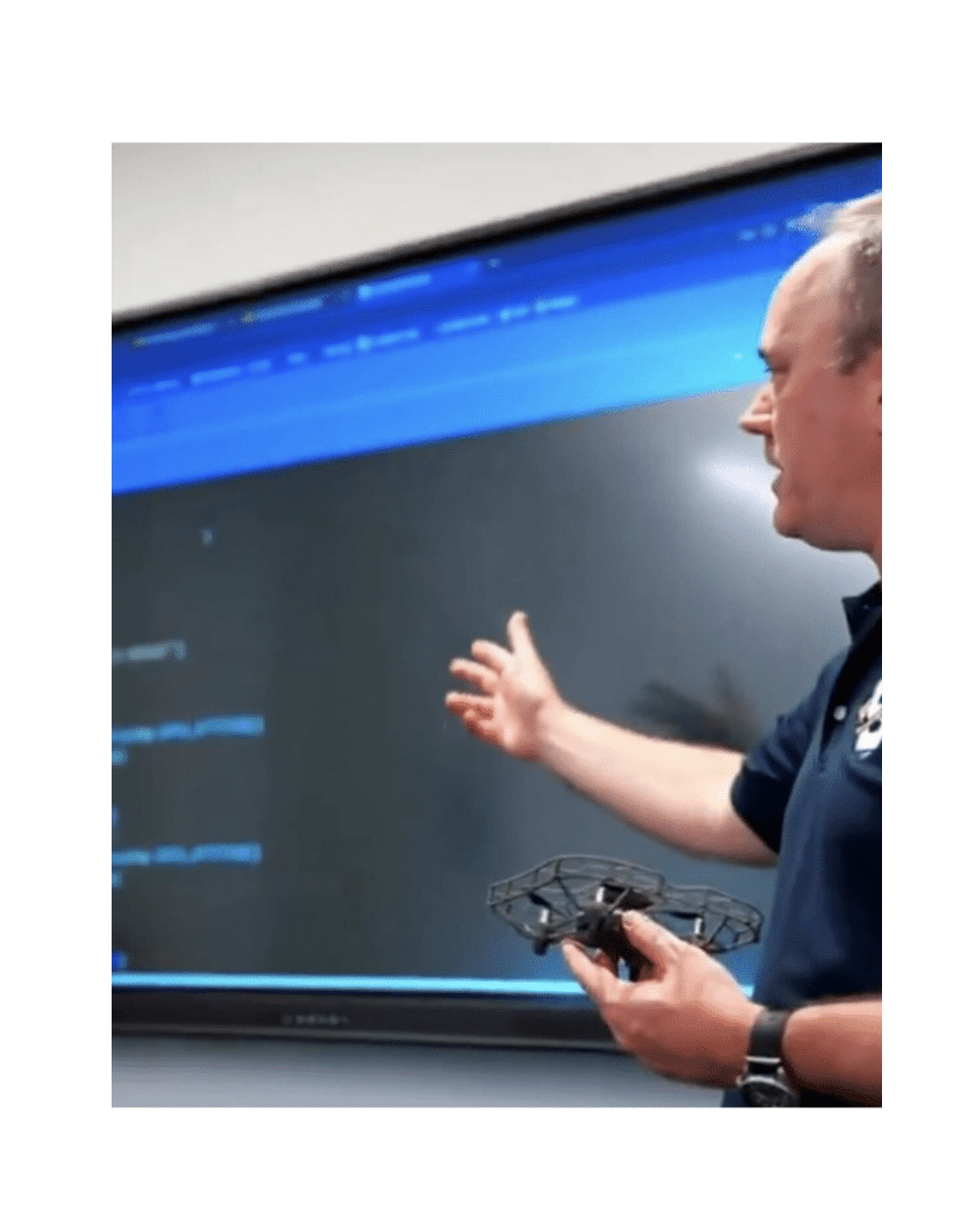

That is the question researchers at the Massachusetts Institute of Technology are working to answer with a new AI-driven “speech-to-reality” system. Their system lets a person describe an object out loud, such as “I want a simple stool,” and watch a robotic arm assemble it within minutes.

This research is about collapsing the distance between human intent and physical creation, using robotics as the bridge.

How the Speech-to-Reality System Works

The system developed at MIT combines multiple advanced technologies into a single workflow:

- Speech recognition and natural language processing

A large language model interprets spoken input and translates it into design intent. - 3D generative AI

The system generates a digital mesh of the object described by the user. - Robotic assembly planning

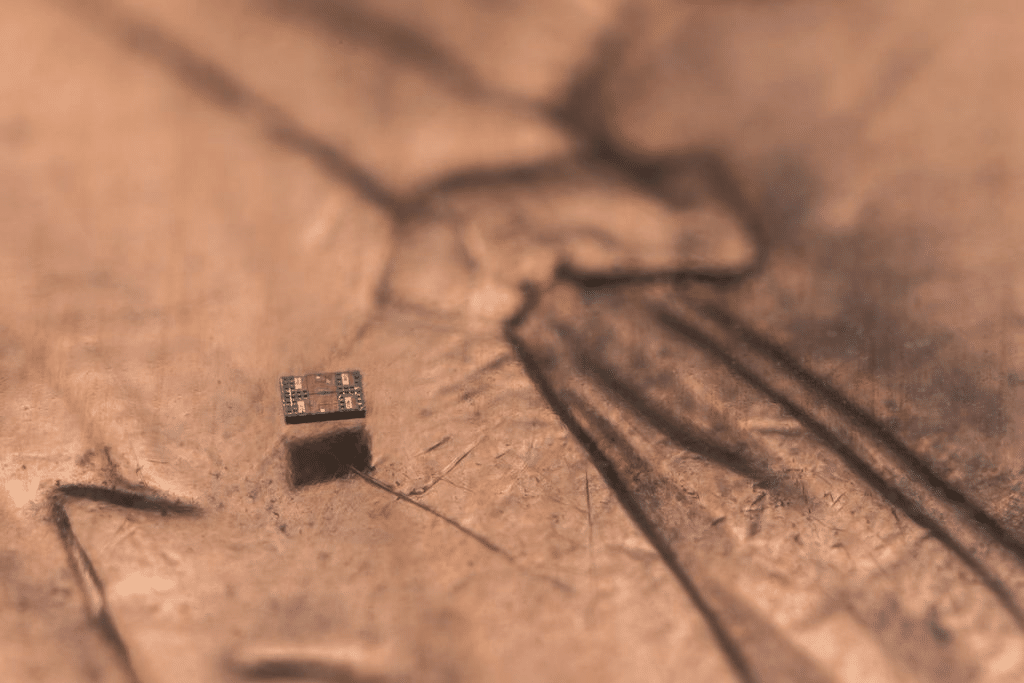

An automated sequence and motion path are generated so the robotic arm can assemble the object efficiently and safely - Voxelization and geometric processing

The mesh is broken into modular building blocks and adjusted to meet real-world fabrication constraints such as stability, overhangs, and connectivity.

.
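To make the voxelization and assembly-planning steps more concrete, here is a toy Python sketch. It is not MIT's implementation. It assumes the object has already been turned into a grid of unit blocks (the stool below is written by hand rather than derived from a generated mesh), and the names `stool_voxels`, `is_connected`, and `assembly_order` are hypothetical. The sketch checks that the blocks form one connected piece, then derives a bottom-up placement order in which every block rests on the ground, sits on a block below it, or attaches to a neighbor on the same layer.

```python
from collections import deque

Voxel = tuple[int, int, int]  # (x, y, z) grid cell; z == 0 is the ground layer

# Face-adjacent neighbors, used for the connectivity check.
FACE_OFFSETS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
# Assumed support rule: the block below, or a side neighbor on the same layer.
SUPPORT_OFFSETS = [(0, 0, -1), (1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0)]


def shifted(v: Voxel, offsets: list[Voxel]) -> list[Voxel]:
    """Return the cells reached from v by each offset."""
    x, y, z = v
    return [(x + dx, y + dy, z + dz) for dx, dy, dz in offsets]


def stool_voxels() -> set[Voxel]:
    """Hand-written stand-in for a voxelized mesh: four legs and a 3x3 seat."""
    legs = {(x, y, z) for x in (0, 2) for y in (0, 2) for z in (0, 1)}
    seat = {(x, y, 2) for x in range(3) for y in range(3)}
    return legs | seat


def is_connected(voxels: set[Voxel]) -> bool:
    """Breadth-first search: True if every block is reachable from any other."""
    if not voxels:
        return True
    start = next(iter(voxels))
    seen = {start}
    queue = deque([start])
    while queue:
        for n in shifted(queue.popleft(), FACE_OFFSETS):
            if n in voxels and n not in seen:
                seen.add(n)
                queue.append(n)
    return len(seen) == len(voxels)


def assembly_order(voxels: set[Voxel]) -> list[Voxel]:
    """Greedy bottom-up order; each block is supported when it is placed."""
    placed: list[Voxel] = []
    placed_set: set[Voxel] = set()
    remaining = set(voxels)
    while remaining:
        ready = [
            v for v in remaining
            if v[2] == 0 or any(n in placed_set for n in shifted(v, SUPPORT_OFFSETS))
        ]
        if not ready:
            raise ValueError("some blocks cannot be reached from the ground")
        # Lowest layer first, then a fixed x/y tie-break so the order is repeatable.
        block = min(ready, key=lambda v: (v[2], v[0], v[1]))
        placed.append(block)
        placed_set.add(block)
        remaining.remove(block)
    return placed


if __name__ == "__main__":
    stool = stool_voxels()
    print("connected:", is_connected(stool))
    for step, block in enumerate(assembly_order(stool), start=1):
        print(step, block)
```

A real planner would also have to account for motion paths, gripper clearance, and physical stability. The support rule here is a deliberately simple stand-in for those constraints.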

Using this process, the MIT team has already built stools, shelves, chairs, tables, and decorative objects like animal figures. Whereas 3D printing can take hours or days, the robotic arm assembles these objects in minutes.

Why This Matters Beyond the Lab

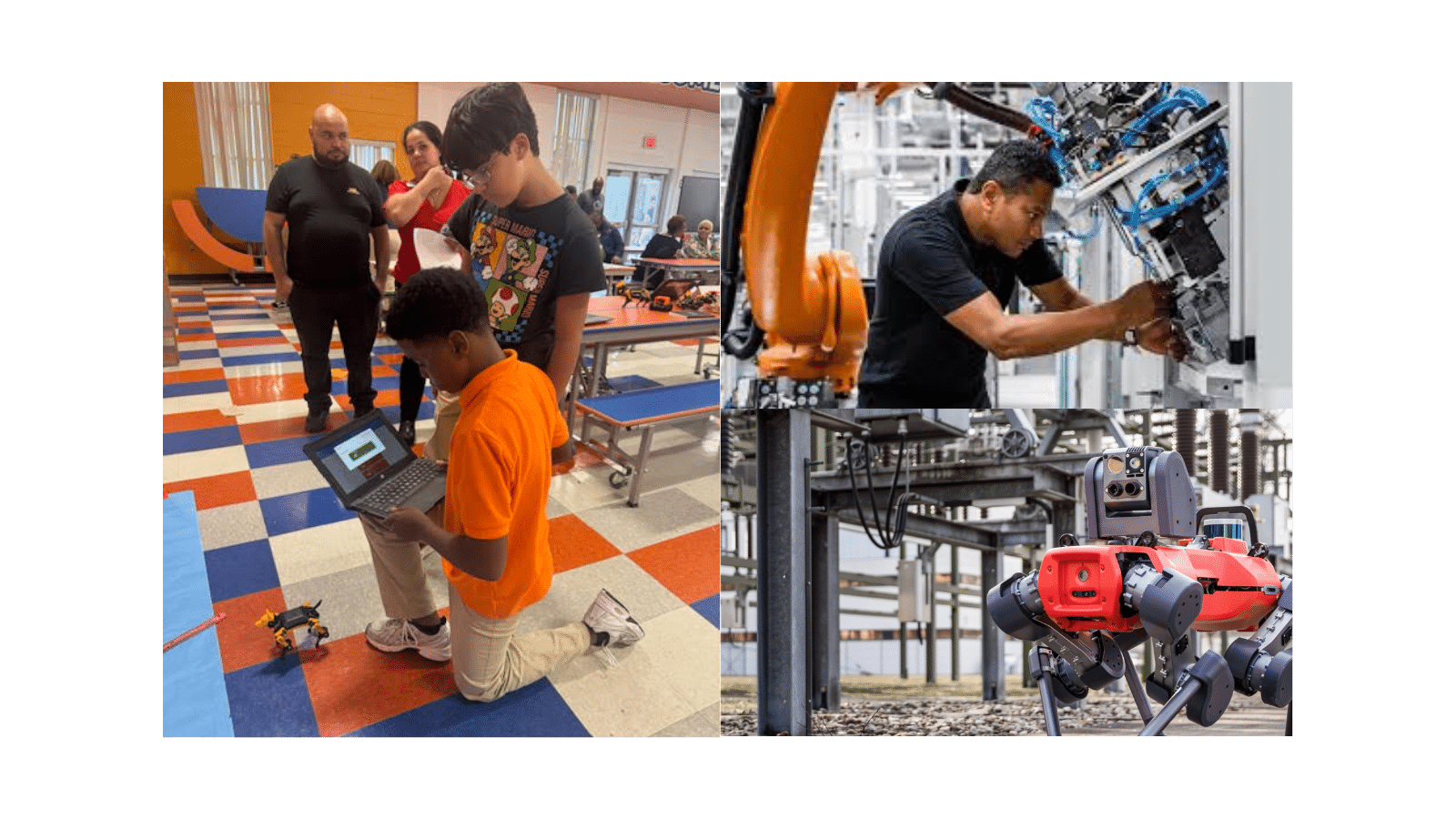

This project sits at the intersection of AI, robotics, and manufacturing, but its implications extend far beyond research environments.

By allowing people to create physical objects without knowing CAD software or robotic programming, the system lowers the barrier to participation in design and fabrication. It reframes robotics as a collaborator rather than a tool reserved for specialists.

The modular nature of the components also points toward more sustainable manufacturing models. Objects can be disassembled and rebuilt into something new instead of discarded. A shelf could become a table. A sofa could become a bed. The materials remain in circulation.

At its core, this work explores how humans and robots can co-create physical systems using natural communication methods.

A Clear Signal for Robotics Education

For educators, this research highlights a shift that is already underway.

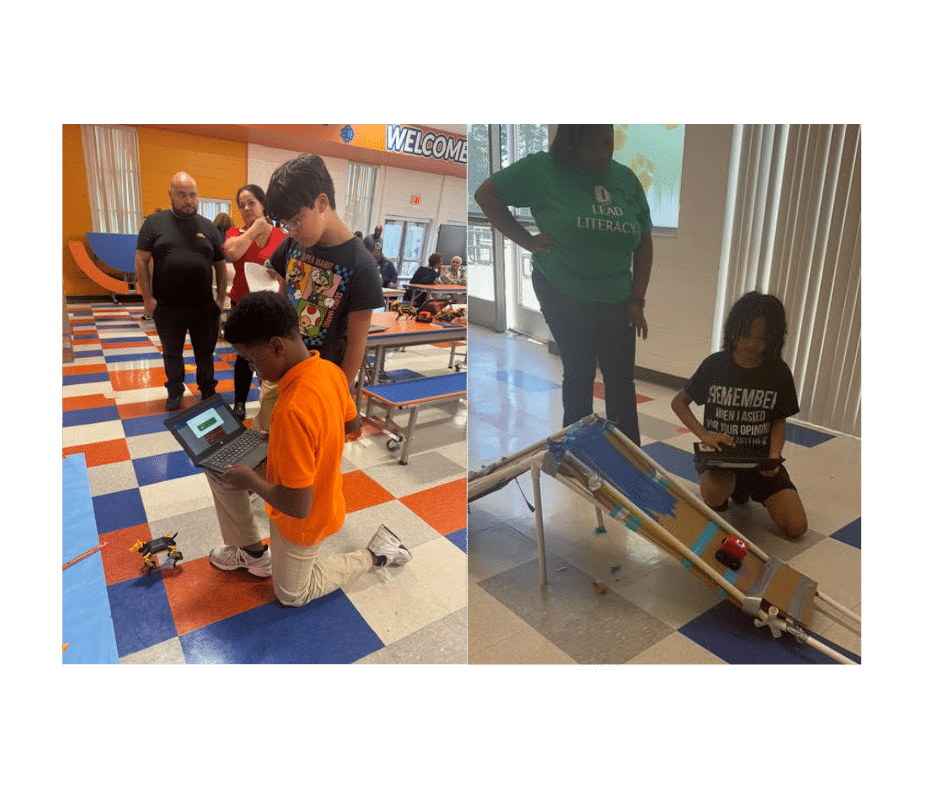

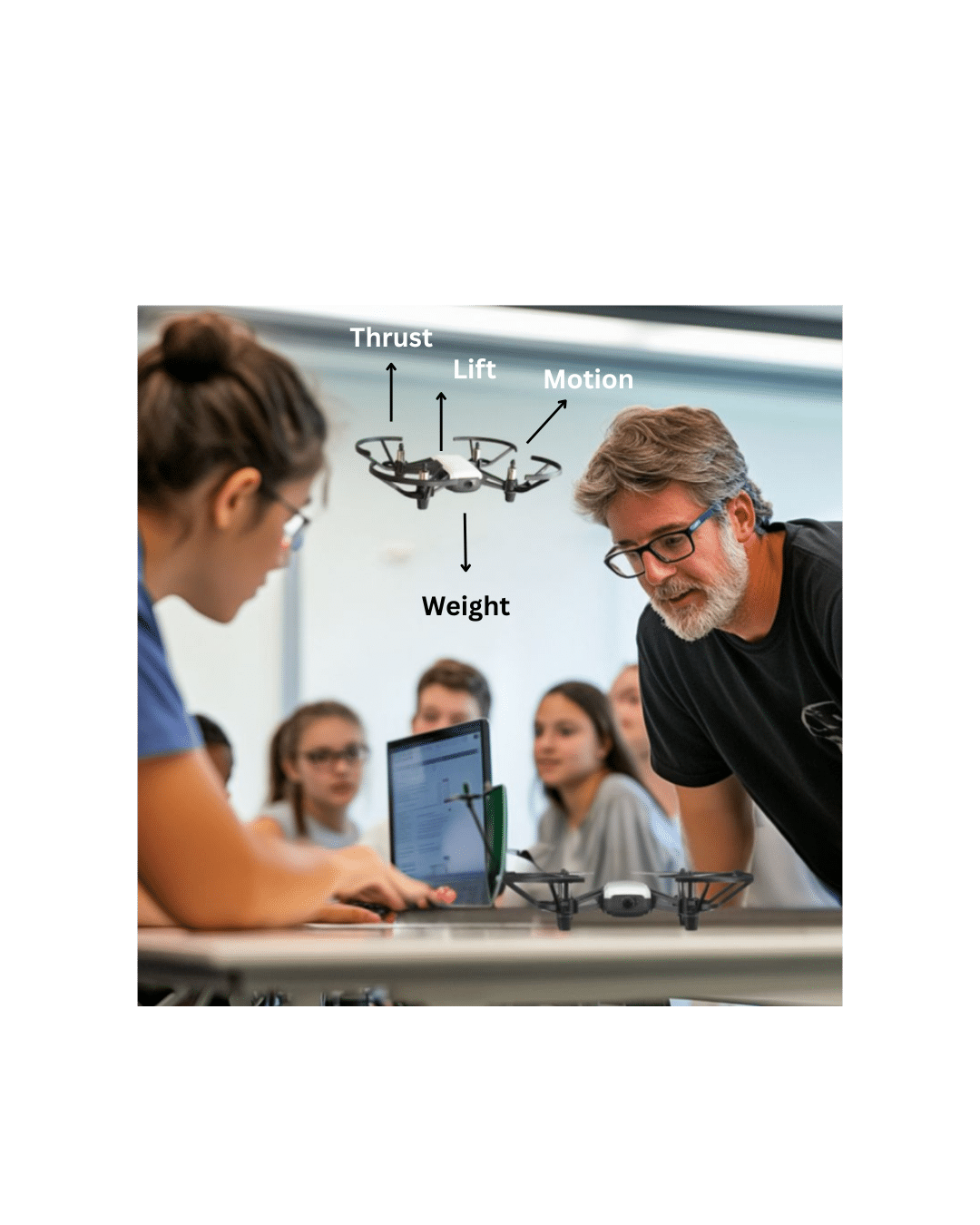

Students are no longer just learning how to code a robot to follow instructions. They are learning how systems translate human intent into action, how AI decisions affect physical outcomes, and how mechanical constraints shape design choices.

Understanding this pipeline, from language to logic to motion, is becoming a foundational robotics concept. It blends computer science, mechanical engineering, AI, and systems thinking into a single learning experience.

This is the direction in which modern K-12 robotics education is moving: connected, multidisciplinary, and grounded in real-world systems.

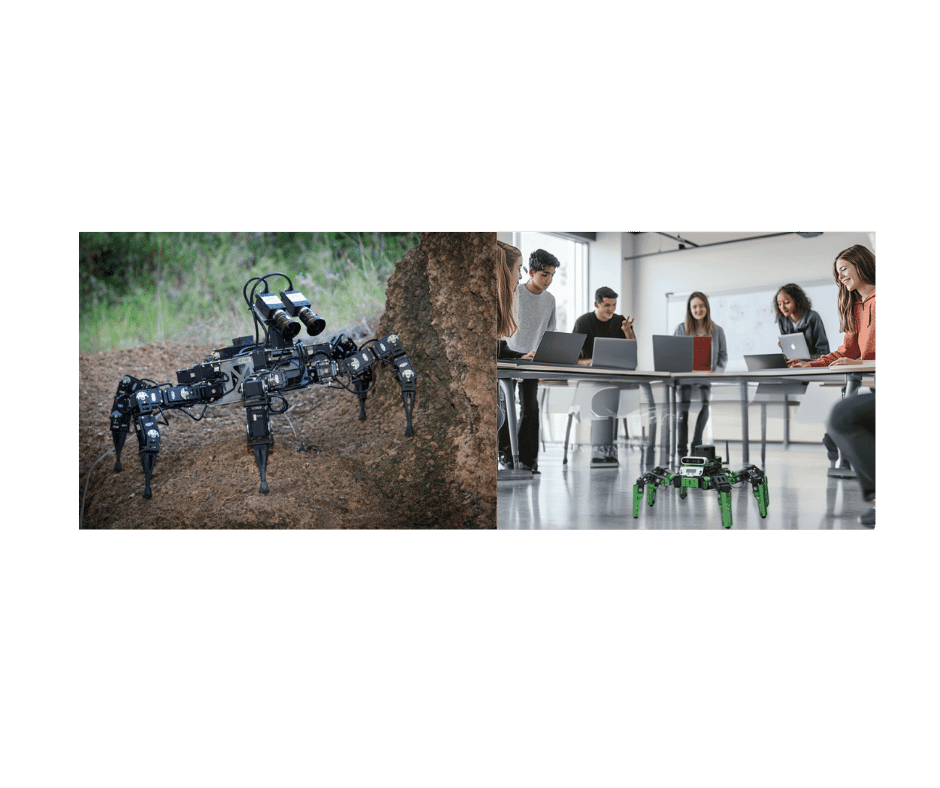

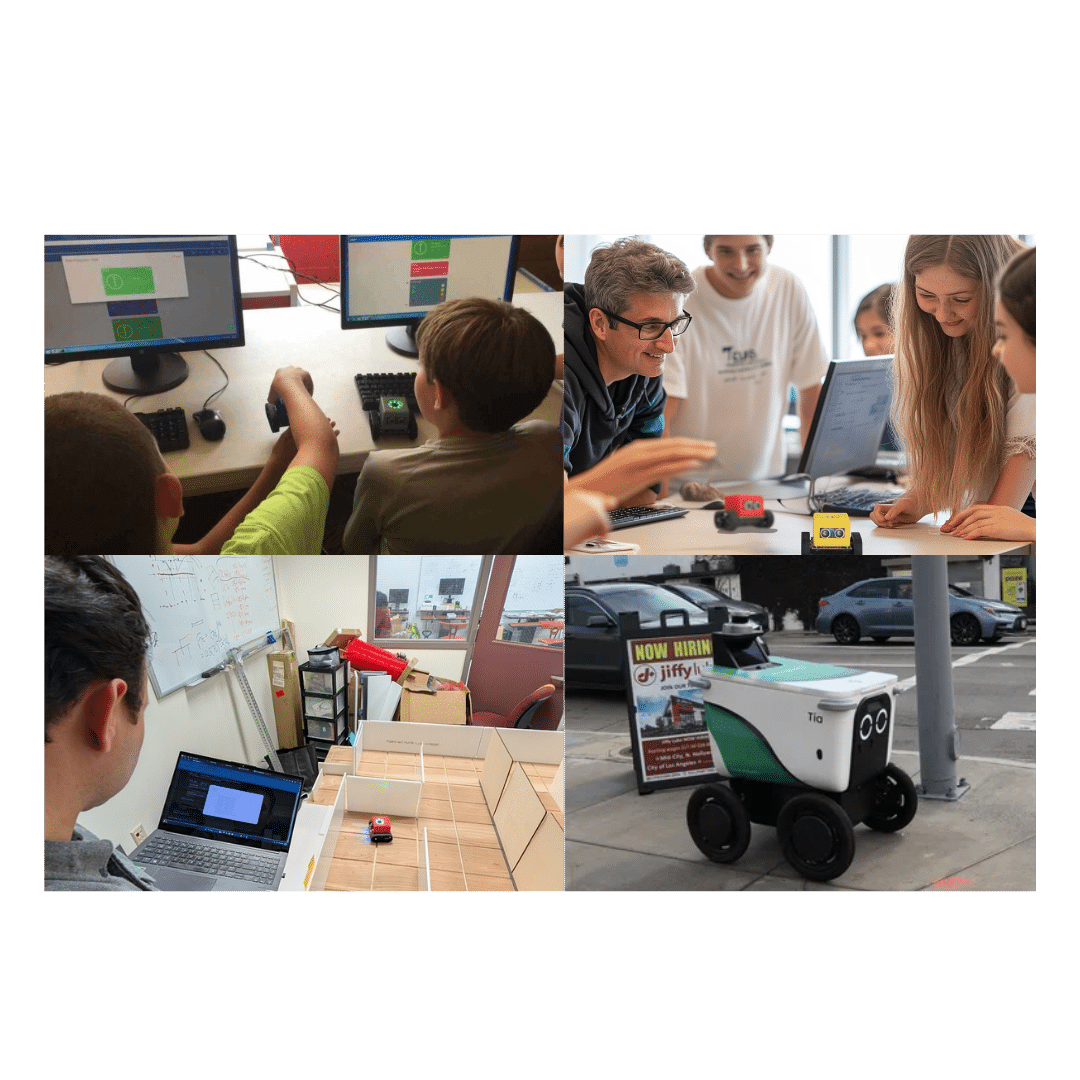

Where LocoRobo Fits In

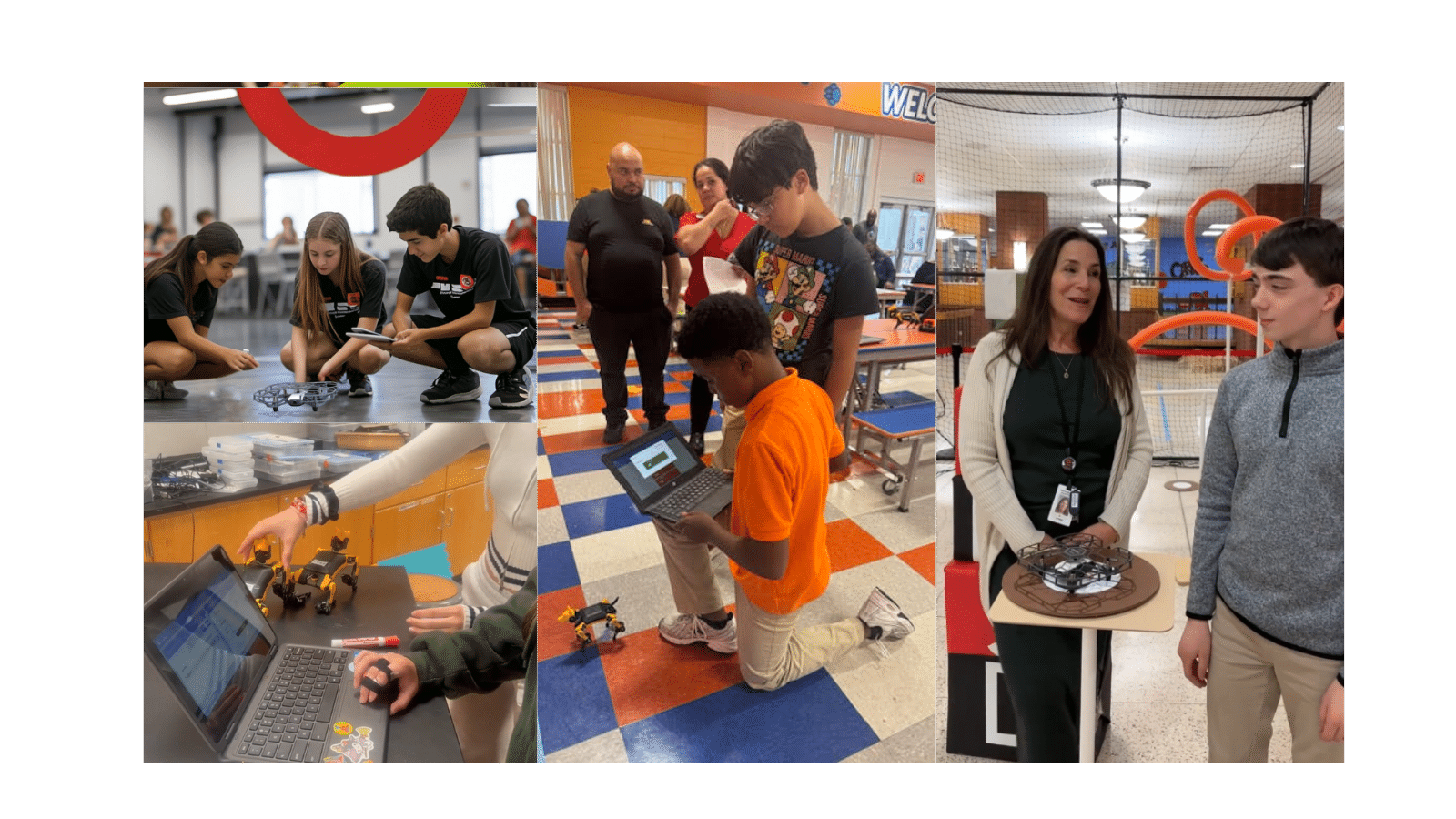

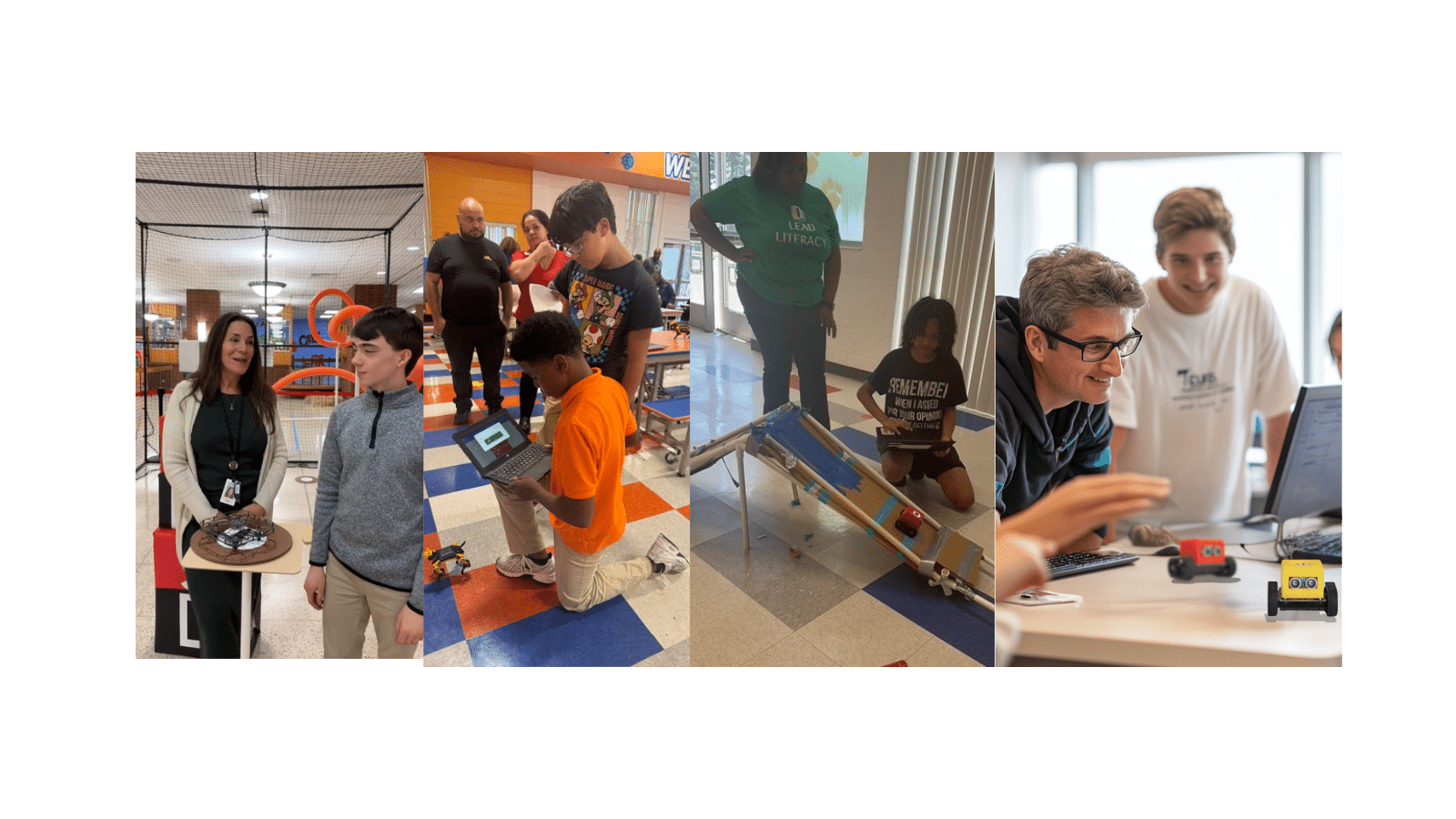

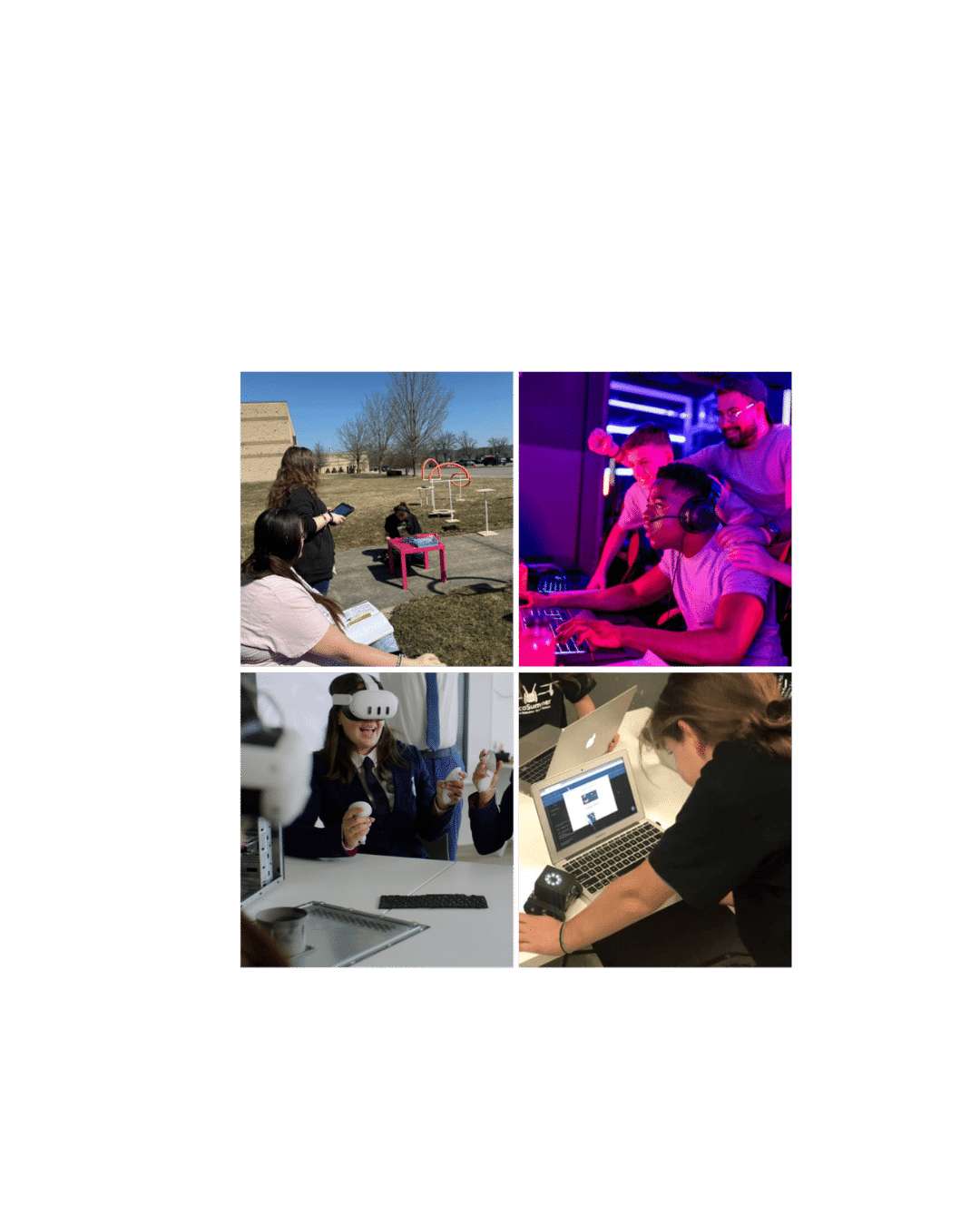

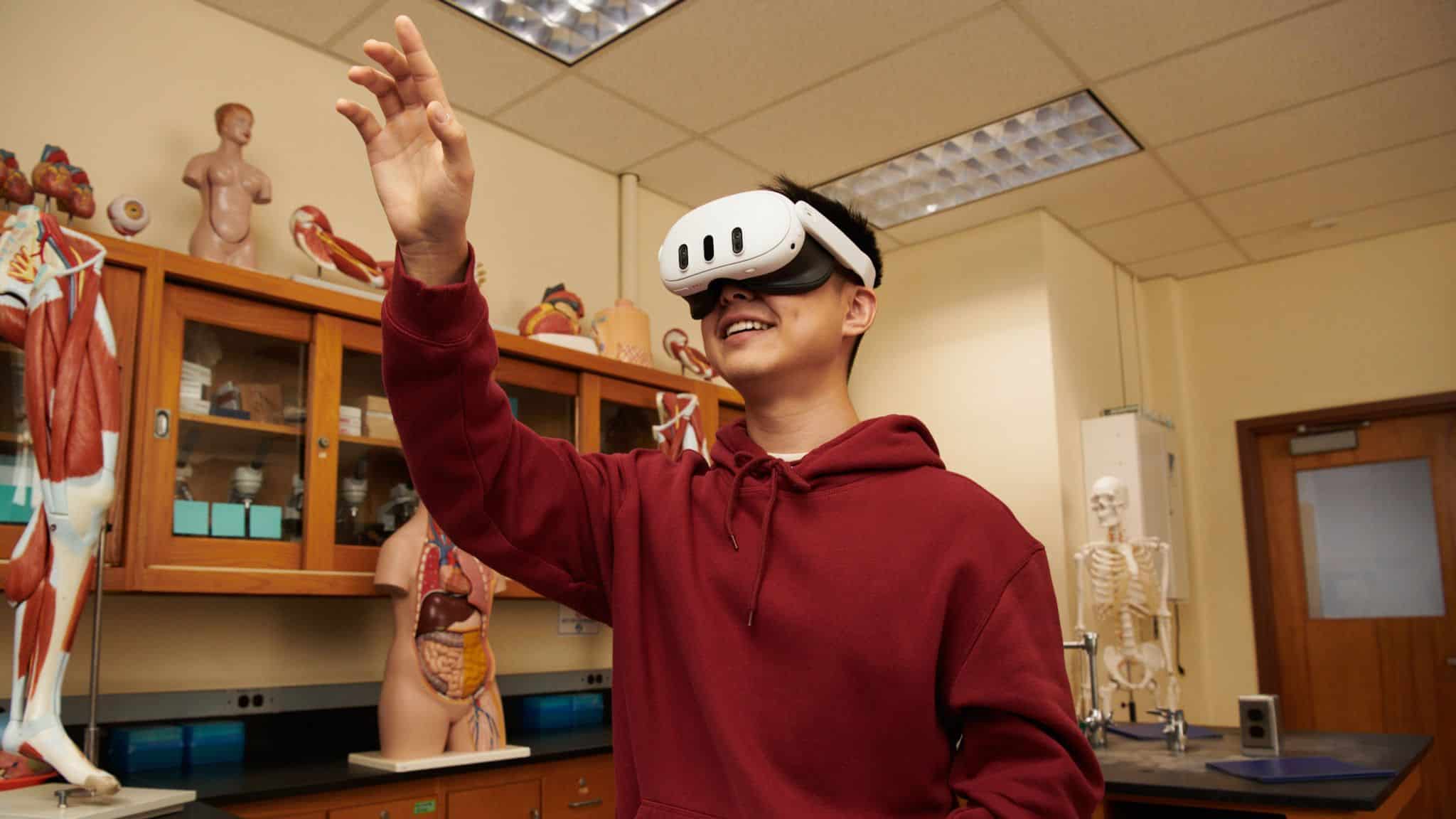

At LocoRobo, we design STEM robotics solutions that help educators bring these kinds of systems into their programs in practical, classroom-ready ways.

Our STEM robotics kits give students hands-on experience with:

- Robotic motion and control

- Sensors and real-time feedback

- AI-assisted decision making

- Mechanical design and assembly concepts

- Programming workflows that connect logic to physical outcomes

With robotics in education, students learn how instructions become actions, how systems respond to constraints, and how humans and robots work together to solve problems.

Research like MIT’s speech-to-reality system shows where robotics is headed. LocoRobo helps schools start building those skills today. Learn more about LocoRobo’s robotics solutions.